AI Visibility Definition

AI Visibility is the degree to which your brand, content, and entity are surfaced, referenced, or reused by AI systems during answer generation, reasoning, and retrieval. It measures whether LLMs can correctly identify your expertise, associate you with the right concepts, and include you in responses across AI Overviews, Perplexity, ChatGPT, Gemini, and vertical AI interfaces. AI visibility is not about rankings – it is about presence inside the answer layer where users now consume information.

AI Visibility Components

AI visibility is built on five components:

- Entity Strength – how clearly AI systems can identify and classify your brand.

- Semantic Coverage – how completely your content covers the concepts required for answer generation.

- Extractable Knowledge – whether your pages contain definitions, structures, and logic that LLMs can reuse.

- External Signal Reinforcement – whether authoritative sources validate your expertise and identity.

- Cross‑Surface Presence – how consistently you appear across AI Overviews, Perplexity, ChatGPT, Gemini, and other AI systems.

These components determine whether AI systems choose you as a source during retrieval.

What AI Visibility Enables

AI visibility ensures your expertise is present in the environments where users now get answers instead of clicking links. It allows your brand to influence decisions, shape understanding, and maintain relevance even as traditional search traffic declines. With strong AI visibility, your content becomes part of the AI knowledge layer – not just your website.

The pattern examined below is not unique to insurance: similar concentration effects can be observed in other industries when AI-generated answers are analyzed at scale.

The Problem Nobody in Insurance Is Talking About

AI visibility in insurance is becoming the defining competitive frontier of our era – and most global carriers are already losing ground without realizing it.

I have spent 25 years working inside enterprise digital ecosystems, from start-ups to Adecco Group and Atlas Copco. What I see across this industry analysis mirrors almost exactly what I encountered inside large global organizations: strong brands that are structurally invisible to the systems that increasingly mediate discovery and trust.

This research examines nine of the world’s leading Life & Health Insurance carriers – Allianz, China Life Insurance, Legal & General, Life Insurance Corporation of India, Manulife, MetLife, Nippon Life Insurance, Ping An Insurance, and Prudential Financial – through one specific lens: how clearly can AI systems interpret, extract, and represent what these companies actually are?

The results are illuminating. And for enterprise digital leaders in this sector, they carry a direct business consequence.

In multiple cases, relatively small structural adjustments resulted in significantly clearer AI interpretation during testing.

The earlier analysis of the world’s largest banks in this series was the clearest early signal that institutional authority does not automatically translate into machine-readable authority, even among the most digitally mature financial brands.

The industrial manufacturing analysis documented almost exactly the same pattern: structurally mature organizations with strong technical depth still underperformed on the AI visibility signals most critical for retrieval and synthesis.

Why This Research Matters Now

Before I walk through the findings, I want to ground this in what is actually happening at the search discovery layer.

AI systems – ChatGPT, Perplexity, Google’s AI Overviews, Claude – are now functioning as active intermediaries between insurance buyers and information. They do not rank pages the way traditional search engines do. They retrieve, synthesize, and recommend based on how well a brand is defined, structured, and connected within its own digital presence.

Independent benchmark data already tells part of this story in adjacent market segments. Across 373 real-world insurance queries tested on ChatGPT and Perplexity, State Farm and Allstate each appeared in roughly 40% of AI-generated answers, while GEICO – a brand with enormous traditional recall – registered an AI Visibility Score of just 15 out of 100. That gap between brand equity and AI retrievability is precisely the structural problem this research investigates at the global carrier level.
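Answer share of the kind cited above (a brand appearing in roughly 40% of AI-generated answers) is a simple ratio: answers that mention the brand divided by answers collected. The sketch below assumes the answers have already been collected as plain text, one per tested query, and treats a case-insensitive name match as a mention. That matching rule is a hypothetical simplification; the benchmark’s own method is not described here.

```python
def answer_share(answers: list[str], brands: list[str]) -> dict[str, float]:
    """Fraction of collected AI answers that mention each brand.

    ASSUMPTION: a case-insensitive substring match counts as a mention.
    Real benchmarks may use stricter entity matching (aliases, negations).
    """
    if not answers:
        return {brand: 0.0 for brand in brands}
    lowered = [answer.lower() for answer in answers]
    return {
        brand: sum(1 for answer in lowered if brand.lower() in answer) / len(answers)
        for brand in brands
    }


# Hypothetical answers; a real run would collect one answer per tested query.
answers = [
    "For most drivers, State Farm and Allstate are common choices.",
    "You could compare quotes from GEICO and State Farm.",
    "Allstate offers bundled home and auto policies.",
]
for brand, share in answer_share(answers, ["State Farm", "Allstate", "GEICO"]).items():
    print(f"{brand}: {share:.0%}")
```

For scale, a 40% share across 373 queries corresponds to about 149 answers that mention the brand.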

The implication is not theoretical. If an AI system cannot confidently interpret what your company is, it will not confidently recommend it. In a sector built on trust and consideration, invisibility at the AI retrieval layer is a direct pipeline risk.

Methodology

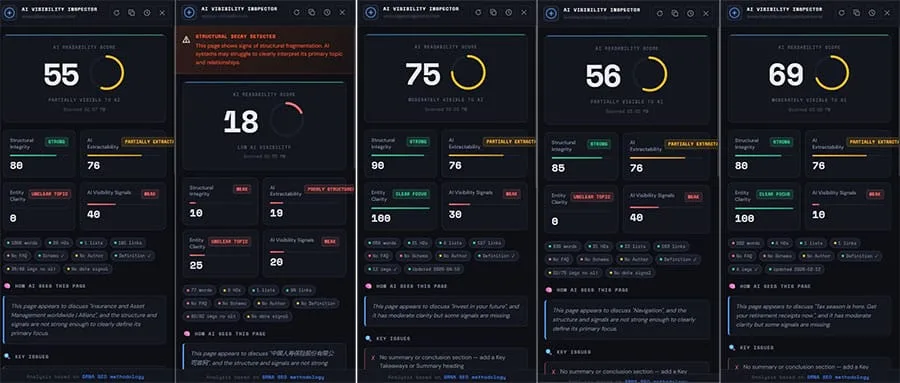

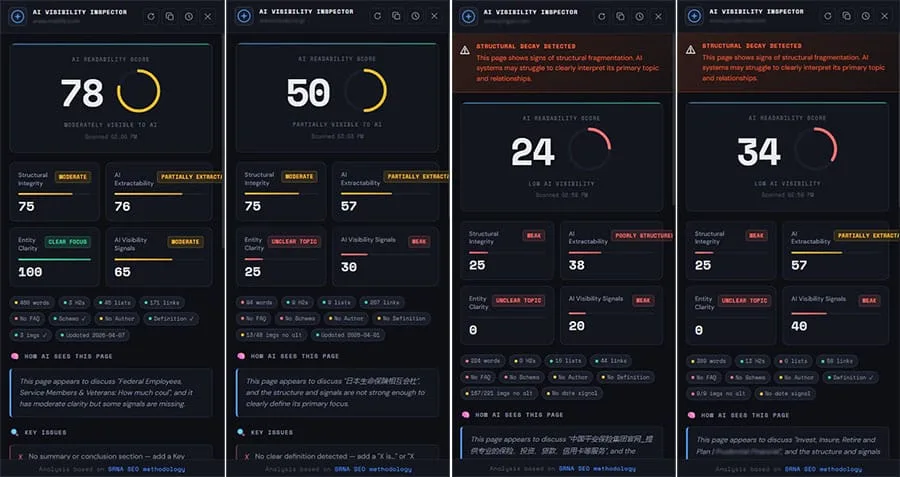

Each company homepage was evaluated across four dimensions using the Ivica Srncevic framework and the AI Visibility Inspector:

- Structural Integrity – how content is organized, segmented, and logically sequenced

- AI Extractability – how easily key information can be parsed and retrieved by AI systems

- Entity Clarity – how clearly the organization’s primary identity and function are defined

- AI Visibility Signals – presence of schema markup, authorship, definitional statements, and structured summaries

These four dimensions generate a composite AI Readability Score representing each carrier’s overall interpretability to AI retrieval systems. The score runs from 0 to 100: scores below 50 indicate significant structural invisibility, scores from 50 to 78 represent moderate but incomplete visibility, and scores above 78 indicate strong AI readiness.
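The article does not publish how the four dimension scores are combined. As a minimal sketch, assuming an equal-weight average (a hypothetical choice, not the AI Visibility Inspector’s documented formula), the composite and the band thresholds described above could be expressed as follows.

```python
from dataclasses import dataclass


@dataclass
class DimensionScores:
    """Scores for one homepage on the four dimensions, each on a 0-100 scale."""
    structural_integrity: float
    ai_extractability: float
    entity_clarity: float
    ai_visibility_signals: float


def composite_score(s: DimensionScores) -> float:
    # ASSUMPTION: equal weighting. The actual weighting used by the
    # AI Visibility Inspector is not described in this article.
    values = [s.structural_integrity, s.ai_extractability,
              s.entity_clarity, s.ai_visibility_signals]
    return sum(values) / len(values)


def visibility_band(score: float) -> str:
    # Thresholds as stated in the methodology: below 50, 50 to 78, above 78.
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score < 50:
        return "significant structural invisibility"
    if score <= 78:
        return "moderate but incomplete visibility"
    return "strong AI readiness"


# Hypothetical page: strong layout, weak entity clarity and signals.
example = DimensionScores(85, 73, 20, 30)
score = composite_score(example)
print(score, visibility_band(score))  # 52.0 moderate but incomplete visibility
```

Under these assumed weights, a page with a clean layout but near-zero entity clarity can still land in the moderate band; the real weighting may shift where individual pages fall.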

For context: no company in this study reached the upper band.

Five Findings That Enterprise Digital Leaders Need to Understand

1. Global Brands Are Structurally “Blurry” to AI

The most consistent pattern across the dataset is this: major global carriers signal authority, but fail to define identity in terms AI systems can anchor on.

Several companies – despite decades of brand investment and global recognition – returned low or near-zero entity clarity scores. In practical terms, this means the homepage describes what the company does in broad strokes, but does not explicitly define it as an entity in the way AI retrieval requires.

This is a critical distinction. AI systems do not infer brand dominance from visual hierarchy, share of voice, or implied familiarity. They extract meaning from explicit definitional statements, structured relationships between concepts, and consistent signals across the page. Without those, even a company with 100+ years of market presence becomes ambiguous in the retrieval layer.

I have seen this problem inside global enterprise environments. A company can be the category leader and still be invisible to an AI summarizing that category – because the definitional scaffolding simply was not built.


If your organization’s homepage does not explicitly state what type of entity it is, what it provides, who it serves, and in which geographies, you are already operating with a structural deficit. For enterprises with 20+ markets and multiple product lines, that deficit compounds across every language variant and regional subdomain. If you are dealing with this kind of structural fragmentation across markets, I covered the mechanics of this pattern in detail in International Website Cannibalization.
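The four elements named above (entity type, offering, audience, geographies) can be treated as a simple checklist. The sketch below is a hypothetical helper, not part of the AI Visibility Inspector: it composes a one-sentence definitional statement from those fields and reports which ones are missing. The carrier and its details are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class EntityDefinition:
    """The four elements a homepage should state explicitly (hypothetical model)."""
    name: str
    entity_type: str = ""                 # what kind of entity it is
    offering: str = ""                    # what it provides
    audience: str = ""                    # who it serves
    geographies: list[str] = field(default_factory=list)  # where it operates


def missing_elements(d: EntityDefinition) -> list[str]:
    """Return the names of definitional elements that are empty."""
    checks = {
        "entity_type": d.entity_type,
        "offering": d.offering,
        "audience": d.audience,
        "geographies": d.geographies,
    }
    return [key for key, value in checks.items() if not value]


def definitional_statement(d: EntityDefinition) -> str:
    """Compose an explicit 'X is a ...' sentence; fail loudly if anything is missing."""
    missing = missing_elements(d)
    if missing:
        raise ValueError("missing elements: " + ", ".join(missing))
    return (f"{d.name} is a {d.entity_type} that provides {d.offering} "
            f"to {d.audience} in {', '.join(d.geographies)}.")


# Hypothetical carrier used only for illustration.
carrier = EntityDefinition(
    name="Example Life AG",
    entity_type="life and health insurance company",
    offering="term life, pension and health coverage",
    audience="individuals and employers",
    geographies=["Germany", "Austria", "Switzerland"],
)
print(definitional_statement(carrier))
print(missing_elements(EntityDefinition(name="Example Life AG")))
```

Raising an error instead of emitting a partial sentence mirrors the point above: a definition with a missing element is the structural deficit, not a minor omission.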

2. Good Visual Structure Does Not Equal AI Readability

Most carriers scored relatively well on structural integrity – typically in the 70–90 range. Clean layouts, logical sections, coherent visual hierarchies. The digital teams behind these properties have clearly invested in UX quality.

But this is where the finding gets uncomfortable: that structure serves navigation, not interpretation.

Navigation structure helps a human user move through a site. Interpretive structure helps an AI system map meaning, understand relationships between topics, and assign contextual priority to information. These are fundamentally different requirements, and most enterprise digital presences were built almost entirely for the former.

What the Inspector flags in several lower-performing pages is what I call structural decay – a state where content blocks exist independently of each other, where the relationships between topics are unclear or absent, and where the overall page behaves more like a UI dashboard than an information source. In that state, AI cannot reliably construct a coherent representation of the organization from the page.

The distinction matters because enterprise leaders often point to strong UX scores as evidence of digital maturity. On an AI readiness basis, those scores address the wrong question.

3. Extractability Is Consistent – But Incomplete

Most carriers clustered in a similar band for AI extractability, approximately 70 to 76. This consistency is itself significant: it tells me that the industry has reached a baseline of technical accessibility (AI can access the content) but not interpretive sufficiency (AI cannot yet fully understand or prioritize that content).

The gap between access and understanding is where value is lost. Extractability without clarity produces partial interpretations, weak summarization, and loss of contextual hierarchy. When an AI system retrieves content from a page that is technically accessible but structurally ambiguous, it hedges. Its outputs become qualified, uncertain, prefixed with phrases like “this page appears to discuss” or “the primary focus is not clearly defined.”

For an insurance carrier, that hedging carries a direct consequence. A consumer asking an AI which company to trust for life coverage will not receive a confident recommendation toward a brand that AI cannot confidently interpret. They will receive a recommendation toward whichever carrier has resolved this problem first.

The AI Search Readiness Blueprint I published earlier this year addresses the specific steps for closing this gap. The sequence matters: you cannot fix extractability problems at the signal layer if the underlying structural and definitional architecture is not in place first.

4. AI Visibility Signals Are Universally Weak – Across Every Carrier

This finding is the most important one in the study, and it is the most consistent: across virtually all analyzed companies, the AI visibility signal layer is severely underdeveloped.

The specific gaps I observed include missing or inconsistent schema markup, no clear authorship attribution, an absence of definitional statements (sentences that explicitly state what the organization is, rather than what it does), no structured summaries or key-takeaway sections, and inconsistent use of structured lists that AI systems can reliably parse.

Even the highest-performing pages in the study, those achieving AI readability scores in the moderate range of 70 to 78, still showed weak signal layers. The content exists. The structural skeleton exists. But the intent signals that allow AI to retrieve, weight, and cite specific claims are largely absent.

This is the enterprise equivalent of building a warehouse full of inventory and then failing to label any of the shelves. The goods are there. The system cannot find them.

For enterprise digital leaders managing large content ecosystems, this gap is both the most urgent problem and the most tractable one. Schema implementation, authorship markup, and definitional content architecture do not require a platform rebuild. They require a clear brief, internal alignment, and disciplined execution at the content layer. I covered the foundational mechanics of this in Entity-Based SEO – the principles apply directly at this level of organizational complexity.
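As a minimal sketch of the schema and authorship layer, the following builds schema.org JSON-LD for an organization and an authored article using only the standard library. All names, URLs and values are hypothetical placeholders, and which schema.org type best fits a given carrier (Organization or one of its more specific subtypes) is a judgment call this sketch does not settle.

```python
import json


def organization_jsonld(name, url, description, area_served, same_as):
    """Build a schema.org Organization node with an explicit definition."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "@id": f"{url}#organization",  # stable identifier other nodes can reference
        "name": name,
        "url": url,
        "description": description,    # the definitional statement: what the entity is
        "areaServed": area_served,     # countries or regions served
        "sameAs": same_as,             # authoritative external profiles of the same entity
    }


def article_jsonld(headline, author_name, author_title, publisher_id):
    """Build a schema.org Article node with explicit authorship."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name, "jobTitle": author_title},
        "publisher": {"@id": publisher_id},  # links the article to the organization node
    }


def script_tag(node):
    """Serialize a node into the <script> element embedded in the page HTML."""
    body = json.dumps(node, ensure_ascii=False, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical values for illustration only.
org = organization_jsonld(
    name="Example Life AG",
    url="https://www.example.com/",
    description="Example Life AG is a life and health insurance company "
                "serving individuals and employers in Germany and Austria.",
    area_served=["Germany", "Austria"],
    same_as=["https://www.example.org/profiles/example-life-ag"],
)
print(script_tag(org))
```

Linking the article’s publisher to the organization’s `@id` keeps authorship and entity identity in one connected graph rather than in isolated snippets.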

If you are operating without this layer, you are also generating weak signals more broadly – and I have mapped the compounding cost of that pattern in Weak SEO Signals.

5. “Moderately Visible” Is the Industry Ceiling – For Now

The full dataset clusters in the 50 to 78 AI Readability range. No carrier in the study reaches what would qualify as a “high visibility” category. No one is winning this game yet.

That is not a story of industry failure. It is a story of industry transition – and, for the organizations that move first, it represents an unclaimed competitive advantage of significant scale.

The Life & Health Insurance sector has a mature digital presence, deep content ecosystems, and established domain authority. But those assets were built for humans navigating and search engines ranking – not for AI systems retrieving and synthesizing meaning. The infrastructure investment is largely already in place. What is missing is the interpretive layer on top of it.

The first carriers that close this gap will not just appear more frequently in AI-generated answers. They will occupy a definitional position within the AI’s understanding of their category – becoming the reference point against which competitors are described. In a consideration-heavy sector like life and health insurance, that positioning advantage compounds over time in ways that traditional SERP rankings never could.

What AI Actually “Sees” When It Reads These Pages

Across multiple pages in the study, the pattern of AI interpretation is strikingly uniform:

- “This page appears to discuss…”

- “Moderate clarity, but key signals are missing…”

- “The primary focus is not clearly defined…”

AI is guessing. It is guessing carefully – but it is not guessing confidently. And in a sector where consumer trust is the entire product, confident AI interpretation is not a technical nicety. It is a commercial requirement.
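One rough way to track this pattern over time is to scan AI-generated descriptions of your pages for hedging phrases like the ones quoted above. The sketch below is hypothetical: the phrase list contains only the examples from this section, and simple substring matching is a crude proxy, not how the AI Visibility Inspector assesses confidence.

```python
# Hedge phrases quoted in this section (lowercased). ASSUMPTION: a real
# monitoring list would need many more phrases and likely a proper classifier.
HEDGE_PHRASES = [
    "this page appears to discuss",
    "key signals are missing",
    "the primary focus is not clearly defined",
]


def hedge_hits(ai_output: str) -> list[str]:
    """Return the hedge phrases that occur in one AI-generated description."""
    text = ai_output.lower()
    return [phrase for phrase in HEDGE_PHRASES if phrase in text]


def hedge_rate(outputs: list[str]) -> float:
    """Share of AI outputs that contain at least one hedge phrase."""
    if not outputs:
        return 0.0
    return sum(1 for o in outputs if hedge_hits(o)) / len(outputs)


# Hypothetical AI descriptions of three pages.
samples = [
    "This page appears to discuss insurance products and services.",
    "Example Life AG is a life and health insurer operating in Germany.",
    "Moderate clarity, but key signals are missing.",
]
print(hedge_rate(samples))
```

A falling hedge rate across repeated runs would be one signal that definitional and structural changes are landing, though it says nothing on its own about citation frequency.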

The Measuring Visibility in the Age of AI Search framework I outlined earlier this year gives enterprise teams a practical basis for tracking where they sit on this spectrum – and what movement in the right direction actually looks like in measurement terms.

The Business Case: Gain and Cost

Estimated gain after implementation: Carriers that invest in resolving entity clarity deficits, implementing structured AI visibility signals, and rebuilding their interpretive content architecture can expect to see meaningful improvement in AI-generated answer citations within three to six months of deployment. For organizations with $1B+ in annual premium revenue, even a modest increase in early-consideration positioning driven by AI citation frequency, capturing a fraction of the buyer journeys that currently route through AI intermediaries, could represent tens of millions in attributable pipeline.

In the adjacent U.S. market context, the gap between State Farm’s roughly 40% answer share and GEICO’s AI Visibility Score of 15 out of 100 across 373 tested queries translates, once scaled to real query volumes, into thousands of missed consideration moments per month. At global carrier scale, with category query volumes that dwarf domestic benchmarks, the magnitude of this gap is proportionally larger.

Cost of not implementing: The compounding cost of inaction in this domain is structural, not just metric-based. AI models learn from the content they encounter and cite repeatedly. A carrier that is not present in AI-generated answers today will find it progressively harder to enter that citation landscape as competitors establish definitional positions. The cost is not simply lost visibility this quarter – it is a narrowing window for organic positioning that becomes more expensive to recover as the AI training landscape solidifies. Beyond that, with 44% of consumers already using digital assistants to understand insurance terms before speaking with an agent, the pre-consideration funnel is already substantially AI-mediated. Carriers without AI visibility are not competing for those users at all.

Key Takeaways

- Nine of the world’s leading Life & Health Insurance carriers were evaluated on AI readiness – none reached the high visibility threshold

- Strong brand equity and AI retrievability are structurally independent – global brands are “blurry” to AI without explicit entity definition

- Good UX structure serves navigation, not AI interpretation – these are different problems requiring different solutions

- AI extractability is consistently moderate across the sector (~70–76), but extractability without clarity produces hedged, uncertain AI outputs

- AI visibility signals – schema, authorship, definitional statements – are universally underdeveloped, even on the highest-performing pages

- The industry ceiling is “moderate visibility” – the first movers who push past it will occupy category-defining positions in AI-generated answers

- The cost of inaction compounds: AI citation landscapes calcify as models develop established reference patterns; the window for organic positioning is closing

- The fix does not require a platform rebuild – it requires interpretive architecture: definitional content, structured signals, and entity clarity built into existing digital ecosystems

This research is part of an ongoing series examining AI visibility across major industry verticals. Studies in the series: Life & Health Insurance (this study) | World’s Largest Banks | Industrial Manufacturing | SaaS CRM | Automobile Industry | Truck & Commercial Vehicle Industry

Frequently Asked Questions

What is AI visibility, and why does it matter for insurance carriers?

AI visibility refers to how clearly and confidently an AI system can interpret, retrieve, and represent a specific organization when generating responses to user queries. For insurance carriers, it matters because AI platforms – ChatGPT, Perplexity, Google AI Overviews – are increasingly functioning as the first point of contact between prospective buyers and carrier information. If the AI cannot confidently identify and describe what your organization is and what it offers, it will route those users toward competitors it can describe with greater confidence.

Why doesn’t brand dominance translate into AI visibility?

Brand dominance in the traditional sense – high consumer recognition, long market history, large advertising spend – does not translate into entity clarity at the AI retrieval layer. AI systems extract meaning from explicit definitional statements, structured schema, and interpretive content architecture. Most global carrier homepages were built for human navigation and search engine ranking, not for AI interpretation. The result is that even the largest carriers are structurally “blurry” to AI systems despite their market stature.

What is structural decay?

Structural decay is the condition where a webpage’s content blocks are technically present but informationally disconnected. The AI Visibility Inspector flags this when relationships between topics are unclear or absent, when there is no logical hierarchy of meaning across the page, and when the page functions more as a navigation interface than as an information source. In this state, AI systems cannot reliably construct a coherent representation of the organization from the available content.

What are the most common AI visibility signal gaps?

The most consistent gaps in this study are: explicit entity definition statements (not descriptions of services, but clear statements of what the organization is), structured schema markup that identifies the organization type and its relationships, authorship attribution on content, structured summaries that provide AI with pre-parsed key information, and consistently formatted lists that allow AI to extract and weight claims reliably.

How long does it take to see results?

Based on both this analysis and broader patterns in enterprise AI readiness work, meaningful improvement in AI-generated citation frequency typically becomes measurable within three to six months of implementing foundational changes – entity definition architecture, schema deployment, and authorship signals. The caveat is that this timeline assumes implementation across the primary digital presence, not just isolated pages.

Does strong traditional SEO performance guarantee AI visibility?

Traditional SEO metrics – domain authority, organic rankings, crawl health – do not reliably predict AI readability or AI visibility. In this study, several carriers with strong structural integrity scores (70–90) and presumed strong traditional SEO foundations still showed weak AI visibility signals. The two disciplines share foundational technical requirements but diverge significantly at the interpretive and definitional layer. High traditional SEO performance is necessary but not sufficient for AI visibility.

Do these findings apply outside the insurance sector?

The specific findings in this study apply to Life & Health Insurance carriers, but the structural pattern – strong navigation architecture, weak interpretive architecture – is consistent across most enterprise sectors that built their digital presence primarily for human users and traditional search engines. The insurance sector is notable because the stakes are particularly high: insurance decisions are high-consideration, trust-dependent, and increasingly initiated through AI-mediated research channels.

What is the first step toward improving AI visibility?

The first step is a structured assessment of current AI interpretability – not a traditional SEO audit, but a specific evaluation of how AI systems currently read and represent your organization. This means testing your primary pages through AI retrieval tools, reviewing your schema implementation against entity clarity requirements, and mapping the gap between your current content architecture and what an AI needs to form a confident representation of your organization. The Search Visibility Diagnostic is the structured process I use to do exactly this with enterprise clients.
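As one small piece of such an assessment, reviewing schema implementation can start by extracting the JSON-LD blocks from a page’s HTML and listing the declared `@type` values. The sketch below is a hypothetical helper built on Python’s standard `html.parser`; it does not fetch pages, does not see markup injected by JavaScript, does not validate against schema.org, and is not the Search Visibility Diagnostic itself.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> element."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data  # script text may arrive in several chunks


def declared_types(html: str) -> list[str]:
    """List @type values of top-level JSON-LD nodes, including @graph members."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types: list[str] = []
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            types.append("INVALID_JSON")  # broken markup is itself a finding
            continue
        nodes = data if isinstance(data, list) else [data]
        for node in nodes:
            if not isinstance(node, dict):
                continue
            for member in node.get("@graph", [node]):
                t = member.get("@type") if isinstance(member, dict) else None
                if isinstance(t, list):
                    types.extend(t)
                elif t:
                    types.append(t)
    return types


# Hypothetical page fragment for illustration.
page = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Organization", "name": "Example Life AG"}
</script>
<script>var analytics = true;</script>
</head><body><h1>Welcome</h1></body></html>"""
print(declared_types(page))
```

An empty result, an `INVALID_JSON` marker, or no organization-type node would correspond to the missing or inconsistent schema markup described in the findings.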

Research Date: April 14, 2026 | Methodology: Ivica Srncevic Framework + AI Visibility Inspector

NOTE: This research is independent – not sponsored by any organization or legal entity!