AI Visibility Definition

AI Visibility is the degree to which your brand, content, and entity are surfaced, referenced, or reused by AI systems during answer generation, reasoning, and retrieval. It measures whether LLMs can correctly identify your expertise, associate you with the right concepts, and include you in responses across AI Overviews, Perplexity, ChatGPT, Gemini, and vertical AI interfaces. AI visibility is not about rankings – it is about presence inside the answer layer where users now consume information.

AI Visibility Components

AI visibility is built on five components:

- Entity Strength – how clearly AI systems can identify and classify your brand.

- Semantic Coverage – how completely your content covers the concepts required for answer generation.

- Extractable Knowledge – whether your pages contain definitions, structures, and logic that LLMs can reuse.

- External Signal Reinforcement – whether authoritative sources validate your expertise and identity.

- Cross-Surface Presence – how consistently you appear across AI Overviews, Perplexity, ChatGPT, Gemini, and other AI systems.

These components determine whether AI systems choose you as a source during retrieval.

What AI Visibility Enables

AI visibility ensures your expertise is present in the environments where users now get answers instead of clicking links. It allows your brand to influence decisions, shape understanding, and maintain relevance even as traditional search traffic declines. With strong AI visibility, your content becomes part of the AI knowledge layer – not just your website.

The Problem Industrial Leaders Are Not Addressing

Following two independent analyses – one across global Life & Health Insurance carriers and another across the world’s largest banking institutions – I expected to see meaningful variation when I turned to the industrial and manufacturing sector.

What I found instead was something more consistent. And, from a strategic standpoint, more concerning.

I have spent 25 years working inside enterprise digital ecosystems, including time at Atlas Copco, one of the companies in this dataset. What I see across this analysis mirrors precisely what I encountered internally: exceptional operational capability combined with digital structures that AI systems cannot interpret with confidence.

This research examines ten of the world’s largest industrial and manufacturing organizations (Siemens, ABB, Atlas Copco, Caterpillar, Honeywell, Bosch, Schneider Electric, Emerson Electric, Rockwell Automation, and Parker Hannifin) through one specific lens: how clearly can AI systems interpret, extract, and represent what these companies actually are?

The results are consistent with the pattern I have now documented across three separate industries.

These websites are not failing because of content quality or scale. They are failing because of structural interpretability.

This is not an SEO problem. It is not a content problem. It is a machine-readability problem at scale.

Similar structural patterns were already visible in the banking analysis, where institutional authority consistently outperformed machine interpretability despite significantly greater digital maturity.

The same gap was evident in the life and health insurance analysis, where strong brand trust and market presence still failed to translate into consistent AI interpretability.

Why This Research Matters Now

Before examining the findings, it is worth grounding this in what is actually happening at the search discovery layer, and why the industrial sector is particularly exposed.

AI systems such as ChatGPT, Perplexity, Google AI Overviews, and Claude now function as active intermediaries between buyers and information. In industrial and manufacturing contexts, that means procurement researchers, specification engineers, technical buyers, and C-suite decision-makers increasingly begin their research inside AI systems rather than on company websites or in search engines.

These systems do not rank pages. They retrieve, synthesize, and recommend based on how well a brand is defined, structured, and connected within its own digital presence.

For industrial companies, the stakes are amplified by sector-specific characteristics. Purchase cycles are long. Decisions are high-consideration. Technical specifications matter. And the buyers who use AI to shortlist vendors are often the same buyers who previously relied on applications engineers or distributor relationships to navigate complexity.

If an AI system cannot confidently interpret what your company is, what it makes, and who it serves, it will not confidently recommend it. In a sector where early shortlisting determines the entire sales trajectory, invisibility at the AI retrieval layer is a direct revenue risk.

Methodology

Each company’s website was evaluated across four structural dimensions using the AI Visibility Inspector and the Ivica Srncevic Framework:

- AI Readability – the overall interpretability of the site to AI retrieval systems

- Structural Integrity – how content is organized, segmented, and logically sequenced

- AI Extractability – how easily key information can be parsed and retrieved by AI systems

- Entity Clarity – how clearly the organization’s primary identity and function are defined

These four dimensions generate a composite AI Readability Score representing each organization’s overall interpretability to AI retrieval systems. The scoring range runs from 0 to 100, where scores below 50 indicate significant structural invisibility, 50–78 represents moderate but incomplete visibility, and scores above 78 indicate strong AI readiness.

For context: no company in this study reached the upper band.
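The banding above can be written down as a small lookup. The following is a minimal Python sketch of the thresholds only: the function name `readiness_band` is hypothetical, placing the boundary values 50 and 78 in the middle band is an assumption based on the stated ranges, and the sketch does not reproduce how the AI Visibility Inspector combines the four dimensions into the composite.

```python
def readiness_band(score: float) -> str:
    """Map a composite AI Readability Score (0-100) to the bands described above."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score < 50:
        return "significant structural invisibility"
    if score <= 78:  # assumption: 50 and 78 both belong to the middle band
        return "moderate but incomplete visibility"
    return "strong AI readiness"


# Illustrative values only, not scores from the study.
for example_score in (42, 65, 90):
    print(example_score, "->", readiness_band(example_score))
```

Keeping the thresholds in one function makes it easy to re-band a set of scores if the cut-offs are ever revised.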

Five Findings That Enterprise Digital Leaders in the Industrial Sector Need to Understand

Finding 1: Strong Structural Integrity Does Not Solve the Interpretability Problem

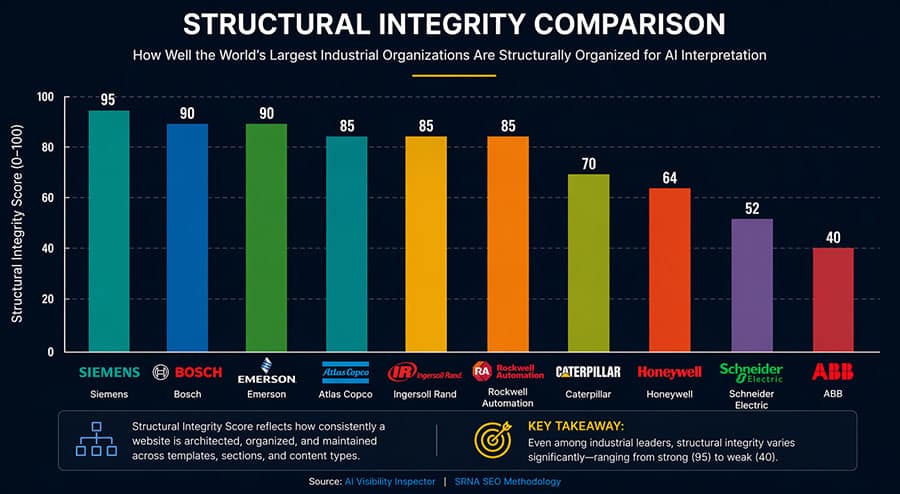

The most misleading pattern in this dataset is the gap between structural integrity scores and overall AI readiness.

Several industrial companies scored well on structural integrity:

- Siemens: 95

- Bosch: 90

- Emerson Electric: 90

These are not broken systems. They represent well-engineered digital environments built by experienced teams.

But structural integrity, as the AI Visibility Inspector measures it, reflects how content is organized and sequenced. It does not measure whether that organization serves AI interpretation.

Navigation structure helps a human user move through a site. Interpretive structure helps an AI system map meaning, understand relationships between topics, and assign contextual priority to information. These are fundamentally different requirements.

Most industrial digital presences, including some of the highest-scoring in this study, were built almost entirely for the former.

ABB and Schneider Electric represent a different category. Both show what I call structural decay patterns: a state where content blocks exist independently of each other, where the relationships between topics are unclear or absent, and where the overall page behaves more like a UI dashboard than an information source. In that state, AI cannot reliably construct a coherent representation of the organization.

Caterpillar occupies a middle position – moderate structure, but weak in the dimensions that actually govern AI retrieval.

The takeaway for enterprise digital leaders is this: your UX scores and your AI readiness scores are answering different questions. And in the current environment, the wrong question is being optimized.

Finding 2: Entity Clarity Is the Sharpest Divide in the Dataset

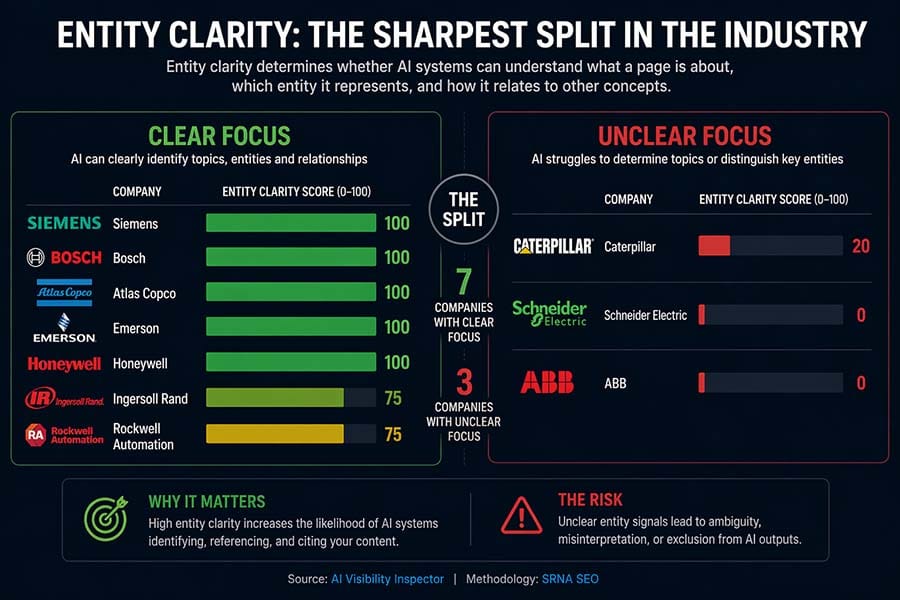

If structural integrity is the most misleading finding, entity clarity is the most consequential.

Entity clarity scores across this dataset split almost entirely into two groups:

High clarity (score: 100)

- Siemens

- Bosch

- Honeywell

- Atlas Copco

Near-zero clarity (score: 0)

- ABB

- Schneider Electric

This is not a gradient. This is a binary outcome: interpretable or not interpretable.

Entity clarity determines whether an AI system can answer three fundamental questions about your organization:

- What is this page about?

- What entity does it represent?

- How does it relate to other concepts in its domain?

Without confident answers to those three questions, citation does not happen. Recommendation does not happen. Presence in the AI-generated answer layer does not happen – regardless of content quality, brand equity, or domain authority.

The companies in the near-zero clarity group are not small brands with limited resources. They are global organizations with substantial digital investments. The clarity gap is not a resourcing problem. It is an architectural one.

AI systems do not infer brand dominance from visual hierarchy or implied familiarity. They extract meaning from explicit definitional statements, structured relationships between concepts, and consistent signals across the page. Without those, even a globally recognized company becomes ambiguous in the retrieval layer.
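To make "explicit definitional statement" concrete, here is a rough Python heuristic that checks whether a block of page text contains a sentence of the form "<Entity> is a/an/the ...". It is an illustrative sketch, not the method the AI Visibility Inspector uses; the function name and the sample company "Example Industrial AG" are hypothetical, and a simple pattern like this will miss many valid phrasings.

```python
import re


def has_definitional_statement(text: str, entity_name: str) -> bool:
    """Return True if the text contains '<entity_name> is a/an/the <word>'."""
    pattern = re.compile(
        r"\b" + re.escape(entity_name) + r"\s+is\s+(?:a|an|the)\s+\w+",
        re.IGNORECASE,
    )
    return bool(pattern.search(text))


# Hypothetical page copy, for illustration only.
defined_copy = "Example Industrial AG is a manufacturer of industrial air compressors."
vague_copy = "Powering progress for a sustainable tomorrow. Discover our solutions."

print(has_definitional_statement(defined_copy, "Example Industrial AG"))
print(has_definitional_statement(vague_copy, "Example Industrial AG"))
```

The first sample states what the entity is; the second is the kind of tagline that carries brand tone but no explicit definition.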

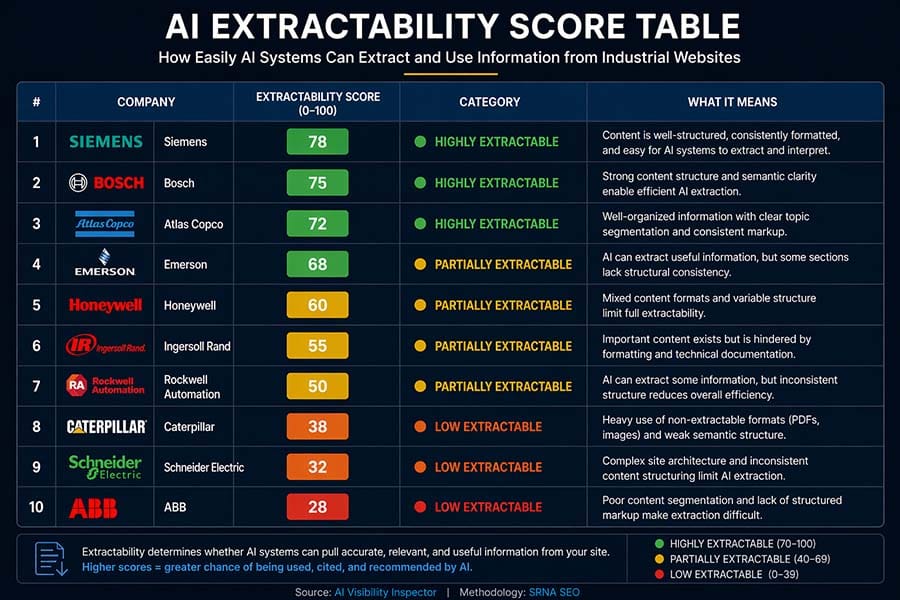

Finding 3: AI Extractability Is Uniformly Constrained – Across the Entire Industry

This is where the industrial dataset aligns precisely with the banking and insurance studies.

AI readability scores across the ten companies ranged from 34 to 81. That range suggests variation. But when analyzed at the extractability layer, which determines not whether content can be read but whether it can be used, the picture collapses into a consistent pattern.

Most companies fall into the partially extractable category. Very few achieve consistently high extractability across their primary digital presence.

This matters because AI systems do not consume pages linearly. They segment, evaluate, and extract usable information blocks. If content is not structured for this process, it is ignored – even if it is accurate, even if it is comprehensive, even if it is better than anything a competitor has published.

In industrial environments, this problem is amplified by sector-specific characteristics:

Technical documentation architecture. Industrial companies produce enormous volumes of technical content: specifications, certifications, application notes, and integration guides. Much of this content lives in PDFs, embedded tables, or dynamically generated pages that AI systems cannot parse reliably.

Product-solution ambiguity. Many industrial websites blur the distinction between product descriptions and solution narratives. For a human reader navigating with intent, that ambiguity can be resolved contextually. For an AI system extracting meaning structurally, it produces interpretive uncertainty.

Fragmented product hierarchies. Industrial product ranges are often vast and deeply nested. Without clear semantic linking between product families, categories, and applications, AI systems cannot construct coherent representations of what a company actually makes and for whom.

Inconsistent naming conventions. Specifications described differently across pages, product lines referred to by multiple naming conventions, and regional terminology variations all reduce AI confidence in extraction.

The extractability gap in this sector is not an accident. It is the predictable result of digital architectures built for human navigation that have not been rebuilt for machine interpretation.
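The naming-convention problem in particular lends itself to a simple audit. The sketch below, in Python, groups product names collected from different pages by a normalized key and reports any product that appears under more than one spelling. The page paths, product names, and function names are hypothetical, and the normalization rule (lowercase, alphanumerics only) is an assumption that would need tuning for a real catalogue.

```python
import re
from collections import defaultdict


def normalize_name(name: str) -> str:
    """Reduce a product name to a comparison key: lowercase, alphanumerics only."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


def find_naming_variants(names_by_page: dict[str, str]) -> dict[str, set[str]]:
    """Group spellings sharing a normalized key; keep keys with more than one spelling."""
    grouped: dict[str, set[str]] = defaultdict(set)
    for name in names_by_page.values():
        grouped[normalize_name(name)].add(name)
    return {key: spellings for key, spellings in grouped.items() if len(spellings) > 1}


# Hypothetical page paths and product names, for illustration only.
pages = {
    "/en/products/xc-200": "XC-200 Compressor",
    "/de/produkte/xc200": "XC200 compressor",
    "/en/applications/mining": "XC 200 Compressor",
    "/en/products/vt-50": "VT-50 Valve",
}

variants = find_naming_variants(pages)
for key, spellings in variants.items():
    print(key, "->", sorted(spellings))
```

In this sample, the compressor is flagged because it appears under three spellings, while the valve, which is named consistently, is not.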

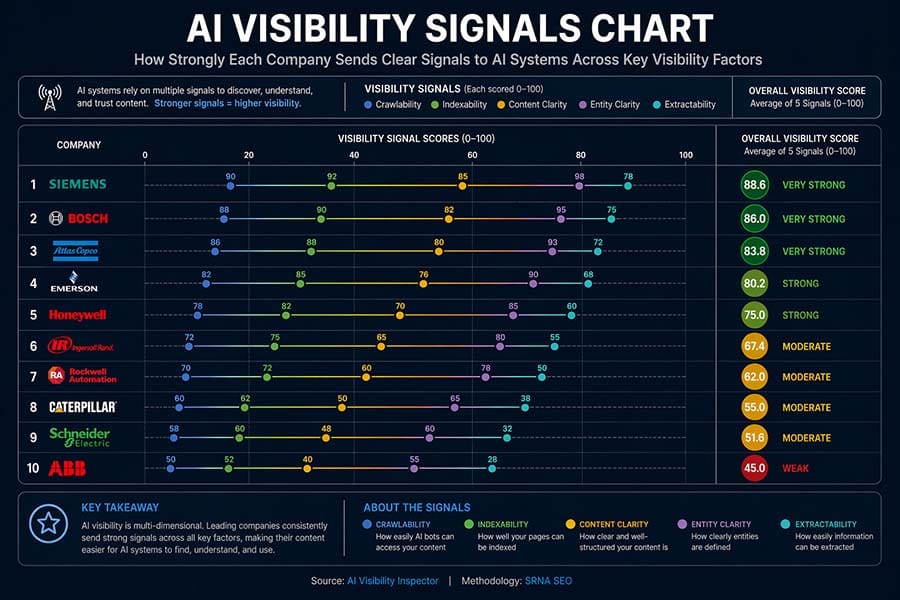

Finding 4: AI Visibility Signals Are Absent – Even From the Best-Performing Sites

Across all ten companies, AI visibility signal scores ranged from 20 to 65. Most clustered at the low end. Even strong performers like Honeywell reached only moderate levels.

This dimension captures the presence of:

- Schema markup (FAQ, Organization, Product, HowTo)

- Author attribution on content

- Definitional statements: sentences that explicitly state what the organization is, rather than what it does

- Structured summaries and key-takeaway sections

- Consistently formatted lists that AI systems can reliably parse and weight

These are not advanced technical requirements. They are the machine-readable scaffolding that allows AI systems to retrieve, weight, and cite specific claims with confidence.

And across ten of the world’s largest industrial organizations, they are largely absent.

This is the enterprise equivalent of building a comprehensive technical library and then removing the catalogue. The knowledge exists. The system cannot find it.

The research is clear on the consequence: structured data provides a machine-readable layer that defines entities and their relationships, and pages with structured signals achieve meaningfully higher rates of AI citation. In this dataset, the opportunity represented by that gap is present across every company studied.
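As an illustration of what that machine-readable layer can look like, the Python sketch below builds a schema.org `Organization` block as JSON-LD and wraps it in the script tag a page would carry. The company, URLs, and description are hypothetical, the helper name `organization_jsonld` is invented for this example, and the property set shown (`name`, `url`, `description`, `sameAs`) is a minimal starting point rather than a complete or recommended profile.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list[str]) -> str:
    """Build a schema.org Organization block as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # A definitional statement: what the organization is, not only what it does.
        "description": description,
        # Links to other profiles that describe the same entity.
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


# Hypothetical company and URLs, for illustration only.
markup = organization_jsonld(
    name="Example Industrial AG",
    url="https://www.example.com",
    description="Example Industrial AG is a manufacturer of industrial air compressors.",
    same_as=["https://www.example.org/profiles/example-industrial-ag"],
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the block from one function, rather than hand-editing it per template, is one way to keep the entity definition identical across page types and regional variants.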

The specific gaps I observe most consistently include:

- Missing or inconsistent schema markup across page types

- No clear authorship attribution on technical or editorial content

- An absence of definitional statements on primary pages: sentences that explicitly state what the organization is as an entity, not just what it offers

- No structured summaries or key-takeaway sections that allow AI to pre-parse the most important information

- Inconsistent use of structured lists that AI systems can extract and weight reliably

Even the highest-performing pages in this study show weak signal layers. The content exists. The structural skeleton exists. The intent signals are missing.

Finding 5: Industrial Websites Fail Differently Than Banks – But They Fail the Same Way

After three independent studies (insurance, banking, and now industrial), a consistent pattern is documented across sectors.

Enterprise websites are structurally underprepared for AI-driven retrieval.

Not because they lack authority, content, or resources. But because they lack extractable structure, entity clarity, and consistent machine-readable signals.

The specific causes differ by sector:

In banking: inconsistency across content governance and fragmented template logic across product areas and regulatory environments.

In insurance: definitional ambiguity at the entity layer and navigation structures optimized for compliance rather than interpretation.

In industrial: product-solution ambiguity, technical data trapped in non-parsable formats, weak semantic linking between component families, and inconsistent naming conventions across a global product range.

The mechanism is different. The outcome is identical.

For industrial organizations specifically, the challenge is compounded by the sheer depth and complexity of their digital ecosystems. A company like Siemens or Honeywell operates hundreds of thousands of pages across dozens of markets and multiple languages. Even strong foundational clarity at the homepage level, which some companies in this study achieve, does not propagate consistently through product pages, application pages, regional variants, and technical documentation libraries.

AI systems encounter that inconsistency and reduce their confidence accordingly. The result is not a failure to find your content. It is a failure to trust it enough to cite it.

What AI Actually “Sees” When It Reads These Pages

Across multiple pages in this study, the pattern of AI interpretation is strikingly consistent: “This page appears to describe industrial automation solutions, but the primary entity is not clearly defined.” “Moderate structural clarity, but key contextual signals are missing.” “Content is technically accessible but not consistently extractable for citation purposes.”

AI is guessing. It is guessing carefully, but it is not guessing confidently.

And in a sector where technical buyers use AI systems to shortlist vendors, to validate specifications, and to understand the competitive landscape before they ever contact a sales team, confident AI interpretation is not a technical nicety. It is a commercial prerequisite.

The Business Case: Gain and Cost

Estimated gain after implementation: Industrial organizations that invest in resolving entity clarity deficits, implementing structured AI visibility signals, and rebuilding their interpretive content architecture can expect meaningful improvement in AI-generated answer citations within three to six months of deployment.

For organizations with significant global revenue, even a modest increase in early-consideration positioning driven by AI citation frequency (capturing a fraction of the technical buyer journeys that currently route through AI intermediaries) represents a measurable revenue impact. In a sector with long purchase cycles and high average deal values, being present at the AI-mediated research stage compounds forward through the entire pipeline.

The industrial buyer who asks an AI system to shortlist automation vendors, compare motion control providers, or explain the differences between industrial connectivity platforms is making a decision that will influence procurement conversations weeks or months later. Presence at that moment is not a marketing metric. It is a pipeline input.

Cost of not implementing: The compounding cost of inaction in this domain is structural, not just metric-based. AI models learn from the content they encounter and cite repeatedly. An industrial company that is not present in AI-generated answers today will find it progressively harder to enter that citation landscape as competitors establish definitional positions.

This pattern has a specific risk profile for industrial organizations: the technical specification layer. If a competitor’s product architecture, capability language, and application framing become the reference point that AI uses to describe a category, that positioning shapes how AI systems describe not just that competitor, but the entire category. Recovering from a definitional deficit in a category you helped create is significantly harder than establishing clarity before the AI training landscape solidifies.

Key Takeaways

- Ten of the world’s largest industrial and manufacturing organizations were evaluated on AI readiness, but none reached the high visibility threshold

- Strong structural integrity scores are misleading: they reflect navigation quality, not AI interpretability

- Entity clarity is the sharpest divide in the dataset; companies either score near 100 or near 0, with almost nothing in between

- AI extractability is uniformly constrained across the sector, amplified by technical documentation in non-parsable formats and product-solution ambiguity

- AI visibility signals (schema, authorship, definitional statements) are absent or inconsistent even on the best-performing sites

- Industrial websites fail differently than banks or insurers, but the outcome is the same: structural exclusion from AI-generated answers

- The cost of inaction compounds: AI citation landscapes solidify as models develop established reference patterns; the window for organic positioning is closing

- The fix does not require a platform rebuild; it requires interpretive architecture: definitional content, structured signals, and entity clarity built into existing digital ecosystems

Ready to Assess Where Your Organization Stands?

If your organization cannot clearly define itself to an AI system today, it is already excluded from a growing share of customer decision journeys.

That assessment is exactly what the Search Visibility Diagnostic is designed to deliver. If you lead SEO, digital strategy, or organic visibility at a global organization, I work with teams like yours directly.

Frequently Asked Questions

AI visibility refers to how clearly and confidently an AI system can interpret, retrieve, and represent a specific organization when generating responses to user queries. For industrial companies, it matters because technical buyers – procurement managers, specification engineers, and operational decision-makers – are increasingly beginning their research inside AI platforms. If an AI system cannot confidently identify what your company makes, who it serves, and how its products relate to the buyer’s application context, it will route those users toward competitors it can describe with greater confidence.

Brand dominance in the traditional sense – strong distributor networks, long sales histories, established application expertise – does not translate into entity clarity at the AI retrieval layer. AI systems extract meaning from explicit definitional statements, structured schema, and interpretive content architecture. Most industrial websites were built for human navigation and search engine ranking, not for AI interpretation. The result is that even category leaders with decades of market presence are structurally ambiguous to AI systems.

Several sector-specific characteristics amplify the general problem. Technical documentation is often stored in PDFs and non-parsable formats that AI cannot reliably extract. Product-solution ambiguity – where the same content serves both specification and marketing purposes – creates interpretive uncertainty. Deep product hierarchies without semantic linking between families and applications prevent AI systems from forming coherent category representations. Inconsistent naming conventions across global markets reduce AI confidence in extraction.

Structural decay is the condition where a website’s content blocks are technically present but informationally disconnected. The AI Visibility Inspector flags this when relationships between topics are unclear or absent, when there is no logical hierarchy of meaning across the page, and when the page functions more as a navigation interface than as an information source. In this study, ABB and Schneider Electric show structural decay patterns most clearly, though elements of this condition appear across multiple companies in the dataset.

The most consistent gaps in this study are: explicit entity definition statements (not descriptions of products, but clear statements of what the organization is and what category it leads), structured schema markup identifying organization type and product relationships, authorship attribution on technical content, structured summaries that provide AI with pre-parsed key information, and consistently formatted comparison or specification tables that allow AI to extract and weight claims reliably.

All three studies use the same AI Visibility Inspector framework and evaluation dimensions. Across insurance, banking, and industrial, the same underlying pattern emerges: enterprise websites are structurally underprepared for AI-driven retrieval. The specific causes differ by sector (governance fragmentation in banking, compliance-driven ambiguity in insurance, and technical documentation architecture in industrial sectors), but the structural outcome is identical. This cross-industry consistency suggests the problem is not sector-specific. It is a system-level shift in how visibility works.

Based on both this analysis and broader patterns in enterprise AI readiness work, meaningful improvement in AI-generated citation frequency typically becomes measurable within three to six months of implementing foundational changes – entity definition architecture, schema deployment, and authorship signals. For industrial companies with large technical documentation libraries, the timeline for full ecosystem coverage is longer, but improvements at the primary digital presence level are measurable within the same window.

The first step is a structured assessment of current AI interpretability – not a traditional SEO audit, but a specific evaluation of how AI systems currently read and represent your organization. This means testing your primary pages and key product category pages through AI retrieval tools, reviewing schema implementation against entity clarity requirements, and mapping the gap between your current content architecture and what an AI needs to form a confident, citable representation of your organization. The Search Visibility Diagnostic is the structured process I use to do exactly this with enterprise clients.

Research Date: 23.04.2026 | Methodology: Ivica Srncevic Framework + AI Visibility Inspector

NOTE: This research is independent, not sponsored by any organization or legal entity!

All company names and logos are used for identification and analysis purposes only.