The Problem Is Not What Banks Think It Is

When I ran an AI visibility analysis across 10 of the world’s largest commercial banking institutions, I expected to find a performance gap. What I found instead was something more fundamental: a structural eligibility problem that no ranking strategy can fix.

This is not a study about who ranks higher. It’s an audit of whether these institutions’ websites are even capable of being surfaced in AI-generated responses – the kind that now drive an increasing share of financial discovery decisions before a consumer ever visits a website.

Research shows that 60% of U.S. adults now use AI-powered search to find financial information, and Gartner projects that traditional search volume will drop by 25% by 2026 as users increasingly turn to AI chatbots. That is not a trend. That is a structural shift in how financial products get discovered, evaluated, and chosen.

The banks in this analysis – JPMorgan Chase, Bank of America, Morgan Stanley, Goldman Sachs, HSBC, Royal Bank of Canada, Commonwealth Bank, HDFC Bank, ICBC, and Mitsubishi UFJ Financial Group – collectively manage trillions in assets. And most of them, based on our AI Visibility Inspector analysis, are operating with a critical blind spot.

The same structural pattern appears in the life and health insurance analysis, where strong institutional trust and market authority still failed to translate into consistent AI interpretability.

A similar visibility gap was later confirmed in the industrial manufacturing analysis, where technically sophisticated global organizations remained structurally weaker than expected in AI retrieval environments.

What We Measured – and Why It Matters

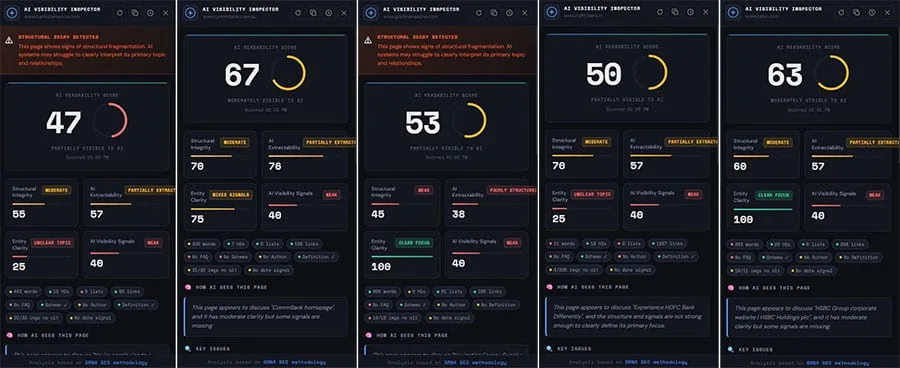

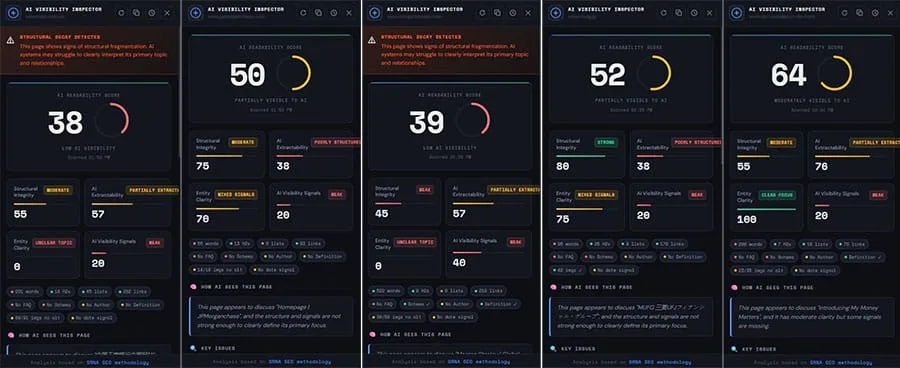

The AI Visibility Inspector evaluates each website across four structural dimensions: AI Readability, Structural Integrity, AI Extractability, and Entity Clarity. These are not vanity metrics. They represent the functional criteria AI retrieval systems use when deciding what content to surface, cite, or ignore.

Visibility no longer starts with a search engine results page. It starts with the answer itself – and if your information is incomplete, inconsistent, or harder to interpret than a competitor’s, the model will choose the clearer source. That is the operating reality for every institution in this dataset.

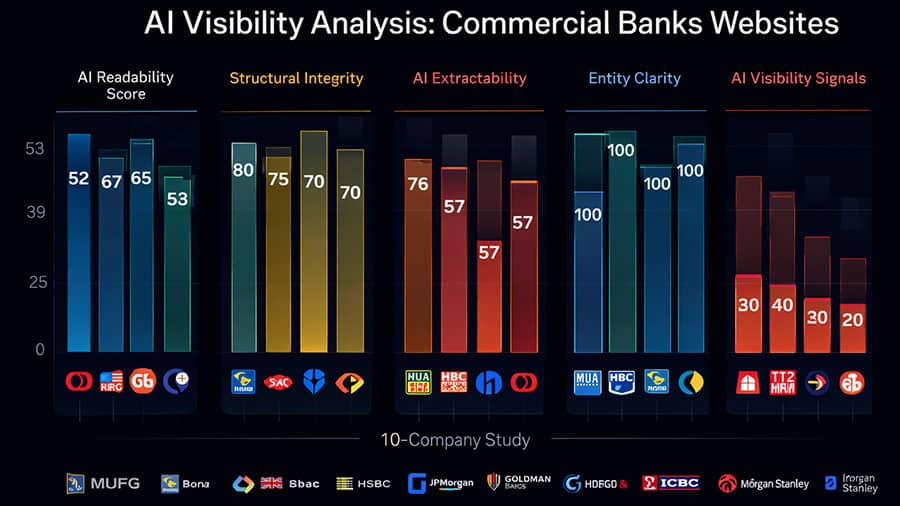

The overall AI readability scores ranged from 38 to 67, with an average of approximately 53. Most banks fall into what I call “partial visibility” territory. They are not invisible – but they are not reliably retrievable either. In AI-driven search, that distinction carries enormous consequences.

Structural Integrity: Technically Acceptable, Strategically Inconsistent

The structural integrity scores ranged from 45 to 80. MUFG scored highest at 80; JPMorgan scored 75.

The pattern here is instructive. Most banks are not structurally broken. Their core architecture is technically acceptable. The problem is inconsistency – templates that work on some pages and fail on others, structural logic that does not carry through across sections. For an AI system attempting to interpret a website as a coherent knowledge source, inconsistency is as damaging as a complete failure.

For banks, the shift from traditional SEO toward AEO, GEO, and agentic commerce is both a challenge and an opening. The cost of inaction could be steep: reduced visibility, loss of customer engagement, and being overshadowed by more tech-savvy rivals – or even by tech companies encroaching on financial services. Structural inconsistency accelerates exactly that risk.

If you manage digital or SEO at a financial institution, I would encourage you to read my analysis on structural decay in enterprise SEO – because what I found in this banking dataset mirrors what I have seen in large-scale enterprise environments more broadly.

AI Extractability: The Critical Failure No One Is Talking About

The extractability scores tell the most alarming story in this dataset. The range is 38 to 76. RBC and Commonwealth Bank both scored 76, the strongest performers.

JPMorgan Chase manages over $3.9 trillion in assets. It scored 75 on structural integrity. Yet it scored 38 on AI extractability. That gap is the whole story.

Content can exist and still be invisible to AI. Extractability is not about having information on a page – it is about whether an AI system can reliably parse, interpret, and cite that information when constructing an answer. Pages in this analysis were flagged as “poorly structured” and “partially extractable” across multiple institutions. The content is there. The machine cannot reliably use it.

More than 60% of citations in AI-generated financial responses came from publishers or affiliate sites rather than the financial institutions themselves. The content ecosystem around a brand now matters as much as the brand’s own website. That statistic should stop every Head of Digital in financial services in their tracks. Third-party publishers, not the banks themselves, are winning AI citations in the institutions’ own product categories.

The cost of this gap is not theoretical. In Q1 2026, analysis of 5,600 ChatGPT responses across nine financial services categories found that just 10 digital banking brands controlled AI-generated recommendations – with most traditional institutions absent from that list entirely. Every AI response that references a competitor or a third-party publisher instead of the bank is a discovery moment permanently lost. At scale, that compounds into a measurable revenue impact.

For teams already working on this problem, my AI Search Readiness Blueprint covers the structural requirements in detail.

The Citation Paradox: Why Media Brands Outperform Global Banks

Our analysis reveals a startling displacement in the financial knowledge graph. While banks own the assets, they no longer own the answers. Over 60% of citations in AI-generated financial responses are currently sourced from high-authority content publishers like NerdWallet, Investopedia, and Reuters, rather than the banks’ own product pages. This means when an AI agent or a searcher asks about ‘commercial lending structures’ or ‘cross-border treasury solutions,’ the LLM is significantly more likely to summarize a third-party editorial than to cite the institution providing the actual service. Banks have outsourced their authority to publishers who have mastered the art of AI-ready content architecture.

Entity Clarity: The Knowledge Architecture Problem

Entity clarity scores showed the most extreme polarization in the dataset: 0 to 100. RBC, HSBC, and Goldman Sachs scored 100. HDFC Bank and Bank of America scored 25.

Entity clarity is about whether a page clearly communicates what it is, what entity it represents, and how that entity connects to the broader knowledge graph. It is not an SEO problem. It is a knowledge architecture problem – and it is one of the primary eligibility blockers in AI retrieval.

Financial institutions must now adopt Generative Engine Optimization strategies that recognize affiliates as essential visibility gatekeepers rather than purely lead-generation channels. But before any institution can build an effective GEO strategy, it needs to resolve entity clarity at the page level. If an AI system cannot confidently identify what a page represents, it will not cite it – regardless of the domain authority behind it.

I have written extensively on this in my entity-based SEO framework. The underlying principle applies directly to what this banking dataset reveals: structured entity definition is not optional infrastructure. It is a prerequisite for AI visibility.
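To make "structured entity definition" concrete, here is a minimal sketch of page-level entity markup expressed as schema.org JSON-LD. It is an illustration only, not the AI Visibility Inspector's scoring logic and not any bank's actual markup. The bank name, URLs, and the helper names `build_bank_entity` and `to_script_tag` are hypothetical; `BankOrCreditUnion`, `name`, `url`, and `sameAs` are existing schema.org vocabulary.

```python
import json


def build_bank_entity(name: str, url: str, same_as: list[str]) -> dict:
    """Return a schema.org JSON-LD description of a bank as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "BankOrCreditUnion",      # schema.org type for banks
        "@id": f"{url}#organization",      # stable identifier other pages can reference
        "name": name,
        "url": url,
        "sameAs": same_as,                 # links to external profiles of the same entity
    }


def to_script_tag(entity: dict) -> str:
    """Wrap the entity in the script tag used to embed JSON-LD in a page."""
    payload = json.dumps(entity, indent=2)
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical values, for illustration only.
entity = build_bank_entity(
    name="Example Bank",
    url="https://www.example-bank.test/",
    same_as=["https://example.org/profiles/example-bank"],
)
print(to_script_tag(entity))
```

Reusing the same `@id` across product and corporate pages is one way to give a machine reader a consistent answer to "which entity is this page about?"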

AI Visibility Signals: The Universal Weakness

Every institution in this analysis scored between 20 and 40 on AI visibility signals. There were no strong performers. Not one.

This dimension measures the presence of structured reinforcement elements: FAQ schema, author signals, definition clarity, and other machine-readable trust indicators. These are the signals that tell AI retrieval systems: “this content is reliable, this entity is authoritative, this answer is citable.”

Their absence is not a competitive gap between institutions. It is a systemic blind spot across the entire industry. Advanced AI models are influencing how consumers find and interact with financial services, shifting the focus to helpful, structured content – like schema markup or formatted FAQs – that is contextually rich and delivered in real time. Banks are not producing this at any consistent scale.
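As a rough illustration of two of the signals named above, FAQ schema and author attribution, the sketch below builds a schema.org `FAQPage` object with an `author`. It is an assumption-laden example rather than a prescribed implementation: the helper name `build_faq_page` and the author name are hypothetical, and the sample answer reuses the entity clarity definition from this article.

```python
import json


def build_faq_page(pairs: list[tuple[str, str]], author_name: str) -> dict:
    """Build a schema.org FAQPage dict from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        # Author attribution: FAQPage is a CreativeWork, so `author` applies.
        "author": {"@type": "Person", "name": author_name},
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical question and author; the answer text paraphrases this article.
faq = build_faq_page(
    pairs=[
        (
            "What is entity clarity?",
            "Entity clarity measures whether a page clearly defines what it is, "
            "what entity it represents, and how that entity connects to the "
            "broader knowledge graph.",
        )
    ],
    author_name="Example Author",
)
print(json.dumps(faq, indent=2))
```

The printed JSON would typically be embedded in the page inside a `<script type="application/ld+json">` tag, alongside the visible question and answer text.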

What does implementation actually gain? Based on comparable enterprise transformations I have overseen, institutions that systematically implement structured AI visibility signals – FAQ schema, entity markup, author attribution, definition layers – typically see measurable improvements in AI citation rates within 60 to 90 days. In financial services, where a single product recommendation reaching an in-market consumer can carry significant lifetime value, that improvement compounds rapidly. A 10% to 15% increase in AI citation frequency across high-intent financial queries translates directly into awareness and consideration share gains that traditional SEO metrics never captured.

What does inaction cost? Prospective customers are asking AI which banks to trust, whether refinancing makes sense, and which credit products fit their situation, and those conversations are happening before they ever visit your website. Every month without structural AI visibility optimization is a month of discovery conversations your institution is not part of. For institutions managing millions of potential customer touchpoints annually, the compounding cost of that absence is high.

Structural Decay: The Silent Threat

Four institutions in this analysis showed patterns I classify as structural decay: Bank of America, Goldman Sachs, ICBC, and Morgan Stanley. This means fragmented content structure, unclear topic hierarchy, and weak relationships between content blocks – conditions that make it systematically difficult for AI systems to interpret intent and extract meaning.

Structural decay is particularly dangerous because it is invisible to traditional analytics. Traffic can appear stable while AI retrievability deteriorates. The SEO Maturity Model I use with enterprise clients specifically addresses this – because in large organizations, structural decay typically accumulates over years of decentralized content decisions, template migrations, and governance gaps rather than any single failure event.

The institutions showing decay in this dataset are not failing because of bad content. They are failing because of accumulated architectural inconsistency that traditional SEO governance never prioritized.
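One symptom of unclear topic hierarchy can be checked mechanically. The sketch below is a simple heuristic of my own for illustration, not the method behind the scores in this analysis: it uses Python's standard `html.parser` to flag pages that lack exactly one `h1` or that skip heading levels. The names `HeadingCollector` and `heading_issues` and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # Match h1..h6 only; tags such as <hr> or <header> are ignored.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return human-readable hierarchy problems found in the markup."""
    parser = HeadingCollector()
    parser.feed(html)
    levels = parser.levels
    issues = []
    if levels.count(1) != 1:
        issues.append(f"expected exactly one h1, found {levels.count(1)}")
    # A heading more than one level deeper than its predecessor skips a level.
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            issues.append(f"h{prev} followed by h{cur} skips a level")
    return issues


sample = "<h1>Mortgages</h1><h3>Rates</h3><h2>Fees</h2>"
print(heading_issues(sample))
```

Run across a sample of templates, a check like this can show whether hierarchy problems are isolated to a few pages or repeated across whole sections of a site.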

What This Means Strategically

The conclusion I draw from this data is not that banks perform poorly on AI visibility. The conclusion is that the entire industry is operating under an outdated assumption about how visibility works.

Financial institutions that adapt now will influence not just what customers see, but how customers think about the category – because AI answers are shaping financial literacy, decision-making, and product comparison at scale. The institutions that move first on structural AI readiness will not just win search citations. They will define the answers that shape consumer understanding of the category itself.

For SEO managers, Heads of Digital, and VPs at financial institutions, I want to be direct about what this requires. This is not a content refresh. It is not a schema implementation project assigned to a junior team member. It is a structural repositioning of how your website communicates with machine intelligence – and it requires the same strategic investment and executive alignment as any other core infrastructure decision.

As generative AI platforms replace traditional search engines, AI visibility is now a defining factor in brand discovery, particularly in financial services. The banks that treat this as an SEO side project will be outperformed – not necessarily by other banks, but by the third-party publishers and fintechs that are building AI-optimized content ecosystems around financial products right now.

If your organization is ready to assess where it stands, the AI Search Readiness Audit is the right starting point. It surfaces exactly the structural gaps identified in this analysis and maps them to a prioritized remediation roadmap.

Key Takeaways

Structural eligibility – not ranking – determines AI visibility. The banks in this analysis are not failing at SEO. They are operating under a framework that no longer governs how AI retrieval systems make citation decisions.

Extractability is the critical gap. Content can exist and still be invisible. The gap between structural integrity scores and extractability scores in this dataset – most sharply illustrated by JPMorgan’s 75/38 split – shows that technical competence does not equal machine readability.

Entity clarity is a prerequisite, not a feature. Institutions scoring zero on entity clarity are not “partially optimized.” They are structurally ineligible for consistent AI retrieval, regardless of their domain authority.

AI visibility signals are a universal weakness. No bank in this dataset scored strongly. This is a systemic industry gap, and the institutions that close it first will establish durable competitive advantages that compound over time.

The cost of inaction is real and measurable. Discovery conversations are happening in AI environments before consumers reach any bank’s website. Every month without structural AI visibility remediation is a month of lost consideration that traditional analytics will not capture – until the revenue impact becomes impossible to ignore.

This research is part of an ongoing series examining AI visibility across major industry verticals. The series covers Life & Health Insurance, World’s Largest Banks, Industrial Manufacturing, SaaS CRM, the Automobile Industry, and the Truck & Commercial Vehicle Industry.

Frequently Asked Questions

AI visibility analysis evaluates whether a website’s structure, content organization, and machine-readable signals enable AI retrieval systems to reliably surface and cite that content in generated responses. For banks, it matters because an increasing share of financial discovery decisions now happens inside AI-generated answers before a consumer reaches any institution’s website. If a bank’s digital infrastructure is not structurally eligible for AI retrieval, it is absent from those conversations entirely.

Brand authority and structural AI extractability measure different things. JPMorgan scored 75 on structural integrity but 38 on AI extractability. This gap exists because extractability is not about the quality or quantity of content – it is about whether AI systems can reliably parse, segment, and cite that content when constructing an answer. Pages can be technically sound and still be flagged as “partially extractable” if content blocks lack clear hierarchy, definition layers, or machine-readable structural signals.

Structural decay refers to accumulated architectural inconsistency across a website – fragmented topic hierarchy, weak relationships between content blocks, and unclear page-level intent signals. It is particularly common in large organizations where content decisions have been decentralized over time. For AI retrieval systems, structural decay makes it systematically difficult to interpret what a page is about or confidently cite it as an authoritative source.

Entity clarity measures whether a page clearly defines what it is, what entity it represents, and how that entity connects to the broader knowledge graph. Institutions scoring zero on entity clarity have pages that fail to communicate these fundamentals in a machine-readable way. It is a knowledge architecture problem – and it is a core eligibility blocker, meaning AI systems will not reliably cite those pages regardless of how strong the surrounding brand signal is.

Traditional SEO signals – backlinks, title tags, keyword density – are designed to influence search engine ranking algorithms. AI visibility signals – FAQ schema, author attribution, definition clarity, structured entity reinforcement – are designed to communicate trust, citability, and interpretive clarity to AI retrieval systems. They serve overlapping but distinct functions, and most institutions in this analysis have invested heavily in the former while neglecting the latter entirely.

Based on enterprise implementations I have worked through directly, institutions that systematically implement core AI visibility signals – structured data, entity markup, FAQ schema, author signals – typically see measurable improvements in AI citation frequency within 60 to 90 days. The exact timeline depends on the scale of the remediation and how quickly changes are indexed, but the structural improvements themselves can be audited and validated before citation data confirms the impact.

The structural requirements are similar, but the content strategy differs. Business decision-makers often search for insights and guidance, not just product keywords – and AI systems respond to that by pulling from thought leadership, research, and structured explanations rather than product pages. B2B financial institutions need to optimize a different content layer, but the underlying structural eligibility requirements – entity clarity, extractability, AI visibility signals – apply equally.

Yes. The structural dynamics identified in this banking analysis are consistent with what I found in a separate AI visibility analysis of life and health insurance institutions. The industry-wide pattern – high structural integrity, low extractability, absent AI visibility signals – appears across financial services broadly, not just commercial banking.

Research Date: April 14, 2026 | Methodology: Ivica Srncevic Framework + AI Visibility Inspector

NOTE: This research is independent – not sponsored by any organization or legal entity!