Google’s In-SERP Browsing Definition

In‑SERP Browsing is the shift in user behavior where searchers consume information directly inside Google’s interface – AI Overviews, featured snippets, knowledge panels, carousels, and interactive modules – without visiting websites. It represents a structural change in how discovery works: Google becomes both the search engine and the destination. This shift reduces outbound clicks and fundamentally changes how brands must think about visibility, attribution, and content strategy.

Threat Components

The in‑SERP browsing threat is driven by five components:

- AI‑Generated Answers – Google provides full explanations, steps, and summaries without requiring a click.

- SERP Feature Expansion – modules like People Also Ask, carousels, and product panels absorb user intent.

- Zero‑Click Interactions – users get what they need without leaving the results page.

- Content Substitution – Google synthesizes information from multiple sources, reducing the need to visit any.

- Traffic Redistribution – visibility shifts from websites to Google’s own interface and AI systems.

These components collectively reduce organic traffic even when rankings remain strong.

What This Shift Means for Brands

In‑SERP browsing forces brands to optimize for visibility inside Google’s answer layer, not just in traditional rankings. It requires stronger entities, clearer semantic structure, and content designed for extraction and reuse. Brands that adapt will maintain presence even when clicks decline; brands that don’t will become invisible despite “good rankings.”

Google’s In-SERP Browsing Is Not a Feature

Every major shift in search has redefined who owns the user relationship. This is the first time that ownership is being structurally retained by the search interface itself. If your analytics still define reality, you are measuring a version of search that no longer exists.

Google’s in-SERP browsing is not a product update. It is a structural redefinition of what a click, a visit, and a search session actually mean – and the implications for enterprise organic traffic are more serious than most SEO managers and digital leaders have yet acknowledged.

I have spent 25 years inside this industry, most of them inside global enterprise organisations. I watched Google become the dominant gateway to the web. And now I am watching Google quietly dismantle the deal it made with publishers to build that dominance. What I am describing in this article is not speculation. It is the logical endpoint of a strategy that has been accelerating for years – and it demands a clear-eyed response from every enterprise team that depends on organic search for revenue.

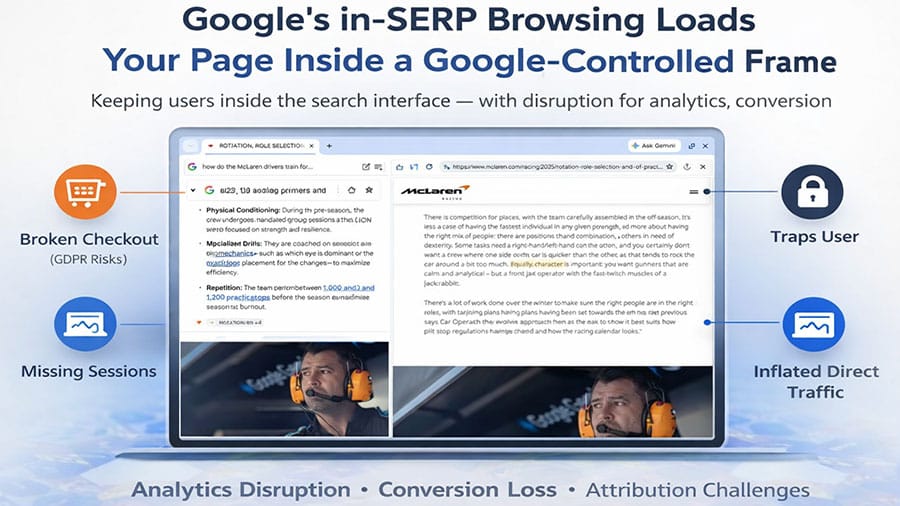

Let me be direct about what is happening. Google has begun rolling out a new browsing experience that loads pages inside a Google-controlled frame within the search interface, allowing users to switch between results without ever leaving the SERP. The rollout is phased, with uneven visibility across markets and devices. Users are technically on your website but, in practice, still within Google’s environment. The boundary between the search engine and the destination website has collapsed.

This is the most consequential structural shift in organic search since the invention of the blue link.

What Google Has Actually Built – And What It Means for Your Traffic

The mechanics of this new interface are worth understanding precisely, because the boardroom implications follow directly from the technical reality. When a user clicks a search result under this model, they encounter a persistent Google header and a split-view or overlay layout. They can jump back to the results instantly and browse multiple results without ever leaving Google’s controlled environment.

This is not a UX experiment. It is a deliberate strategic move, and it has three consequences that enterprise leaders need to understand immediately.

First, your analytics will misreport reality. When Google renders your page inside its own frame, your analytics scripts may not fire reliably. You will see missing sessions, broken referrer chains, and inflated direct traffic figures. Your Google Search Console may report a click that your analytics never recorded as a session. If your leadership team is making decisions based on organic traffic data right now, that data may already be partially wrong.

Second, your conversion infrastructure may be breaking silently. Some checkout systems cannot function inside iframes. Some payment providers block framed environments for security reasons. Cookie consent flows and privacy notices may not display correctly. And even where the technology functions, the user psychology shifts. When someone feels they are still inside Google, your brand’s authority and conversion intent both decline. The CTA that was designed to anchor your customer journey now competes with a persistent Google header at the top of the screen.

Third, the attribution problem extends beyond analytics into legal compliance. If your site’s consent banner does not fire correctly inside a Google-controlled frame, your GDPR and ePrivacy compliance may be compromised – without your knowledge, and without a clear audit trail.
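If tracking scripts cannot be trusted inside a frame, server logs offer a second signal. The sketch below is a minimal illustration, not a confirmed detection method. It assumes the browser sends the standard `Sec-Fetch-Dest` request header and that a framed load would report `iframe` or `frame`. Whether Google’s in-SERP experience actually surfaces this way is an assumption you would need to verify in your own logs. The function name and return labels are hypothetical.

```python
from typing import Mapping

# Sec-Fetch-Dest values that browsers send when a document is loaded
# inside an embedding context rather than as a top-level page.
FRAMED_DESTINATIONS = {"iframe", "frame", "embed", "object"}


def classify_page_load(headers: Mapping[str, str]) -> str:
    """Label one request as 'framed', 'top_level', 'other', or 'unknown'."""
    # HTTP header names are case-insensitive, so normalise before lookup.
    normalised = {name.lower(): value.strip().lower() for name, value in headers.items()}
    dest = normalised.get("sec-fetch-dest")
    if dest is None:
        # Older browsers and most crawlers do not send the header at all.
        return "unknown"
    if dest in FRAMED_DESTINATIONS:
        return "framed"
    if dest == "document":
        return "top_level"
    # Sub-resource requests such as "image", "script", or "style".
    return "other"
```

Counting `framed` against `top_level` loads for landing pages that receive search traffic gives you a server-side baseline that does not depend on client-side scripts firing.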

The cost of not addressing this is concrete. Suppose your enterprise currently drives 40% of early-funnel traffic through informational organic queries, which is typical for B2B and high-consideration B2C. You should then model downside scenarios in which a substantial portion of that informational traffic, potentially the majority, generates no measurable sessions. The conversion and attribution loss that follows is not recoverable through standard SEO optimisation.
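A downside model of this kind can be very simple. The sketch below uses the 40% informational share from the paragraph above. The session total and the three loss rates are hypothetical placeholders, not measurements, and should be replaced with your own figures.

```python
from dataclasses import dataclass
from typing import Dict, Union


@dataclass
class Scenario:
    name: str
    unmeasured_share: float  # fraction of informational sessions no longer recorded


def project_loss(total_sessions: int, informational_share: float,
                 scenario: Scenario) -> Dict[str, Union[str, int, float]]:
    """Estimate how many early-funnel sessions disappear from measurement."""
    informational = total_sessions * informational_share
    lost = informational * scenario.unmeasured_share
    return {
        "scenario": scenario.name,
        "lost_sessions": round(lost),
        "remaining_sessions": round(total_sessions - lost),
        "loss_pct_of_total": round(100 * lost / total_sessions, 1),
    }


# Hypothetical inputs: 100,000 early-funnel sessions, 40% of them informational.
SCENARIOS = [
    Scenario("mild", 0.25),
    Scenario("substantial", 0.50),
    Scenario("majority", 0.75),
]

if __name__ == "__main__":
    for scenario in SCENARIOS:
        print(project_loss(100_000, 0.40, scenario))
```

The point of the exercise is not precision. It gives leadership a range to plan against before the loss shows up in reporting.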

What Is Confirmed – And What Is Still Emerging

Because this development is being rolled out in phases and is not yet uniformly visible across all markets, it is important to distinguish between observed behaviour and forward-looking implications.

Confirmed and observable today:

- Google is increasingly rendering and presenting third-party content within its own interfaces (e.g. AI Overviews, embedded browsing experiences).

- Organic click-through rates are declining across multiple query categories.

- A growing share of searches end without a click to an external website.

- Discrepancies between Google Search Console clicks and analytics sessions are increasing in some environments.
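The last of these signals can be tracked with a small calculation. The sketch below assumes you have already exported per-page click counts from Google Search Console and per-page organic session counts from your analytics platform. The function name and the sample figures are hypothetical. The two tools never match exactly even in normal conditions, so the trend in the gap matters more than its absolute size.

```python
from typing import Dict


def click_session_gap(gsc_clicks: Dict[str, int],
                      sessions: Dict[str, int]) -> Dict[str, float]:
    """Return, per page, the share of reported clicks with no recorded session."""
    gaps = {}
    for page, clicks in gsc_clicks.items():
        if clicks == 0:
            continue  # avoid dividing by zero for pages with no clicks
        recorded = sessions.get(page, 0)
        gaps[page] = round((clicks - recorded) / clicks, 3)
    return gaps


if __name__ == "__main__":
    # Hypothetical sample: one informational page, one commercial page.
    clicks = {"/guide": 1000, "/pricing": 200}
    recorded_sessions = {"/guide": 600, "/pricing": 190}
    print(click_session_gap(clicks, recorded_sessions))
```

Comparing the gap for informational pages with the gap for commercial pages, month over month, shows whether the discrepancy is widening where in-SERP consumption is most likely.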

Emerging and still evolving:

- The full extent to which Google-controlled browsing frames affect analytics tracking and conversion infrastructure.

- The consistency of behaviour across devices, browsers, and regions.

- The long-term impact on attribution models and marketing automation systems.

- The legal and regulatory response across different jurisdictions.

The analysis in this article connects these observed patterns into a structural interpretation of where search is heading. As with any evolving system, specific implementations may change – but the underlying direction is already visible.

The Asymmetry Google Created – And Why It Matters Legally and Ethically

For two decades, Google enforced a set of rules on the web that it is now violating itself. The X-Frame-Options header, which tells browsers not to load a page inside a frame, was respected by every compliant web environment. Chrome, which Google controls, now appears to bypass or reinterpret standard framing protections within Google-controlled experiences. Google penalised websites for preventing users from leaving easily. Google’s own interface now makes it structurally difficult for users to leave Google. Google built its authority on the promise that publishers who allowed Googlebot to crawl their sites would receive visitors in return. That deal has changed.
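For reference, this is what the standard framing protection looks like on the publisher side. The sketch is a minimal WSGI wrapper that adds `X-Frame-Options` and the `Content-Security-Policy` `frame-ancestors` directive to every response. The wrapper name is hypothetical. If Chrome does reinterpret these headers inside Google-controlled experiences, as described above, sending them will not prevent that behaviour. It only documents your stated intent and protects you in compliant environments.

```python
def deny_framing(app):
    """Wrap a WSGI app so every response asks browsers not to frame it."""

    def wrapped(environ, start_response):
        def start_with_headers(status, headers, exc_info=None):
            # Replace any existing X-Frame-Options value to avoid duplicates.
            kept = [(name, value) for name, value in headers
                    if name.lower() != "x-frame-options"]
            kept.append(("X-Frame-Options", "DENY"))
            # An additional CSP header can only tighten the effective policy,
            # so this is safe even if the app already sends its own CSP.
            kept.append(("Content-Security-Policy", "frame-ancestors 'none'"))
            return start_response(status, kept, exc_info)

        return app(environ, start_with_headers)

    return wrapped
```

Sites that legitimately embed their own pages would use `SAMEORIGIN` and `frame-ancestors 'self'` instead of the stricter values shown here.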

This matters beyond the ethical dimension. It creates legal exposure across multiple jurisdictions. The conduct touches copyright boundaries, fair use limits, framing without consent, anti-competitive behaviour, and abuse of combined browser and search market dominance. The EU’s Digital Markets Act creates a specific framework for exactly this kind of conduct. The DOJ antitrust case against Google has already established a context where publishers’ claims about irreparable traffic harm are being taken seriously in court.

I am not predicting outcomes, and nothing in this article constitutes legal advice. I am pointing out that this legal and regulatory pressure is real and accelerating. Enterprise brands should factor it into their strategic posture, in consultation with their legal teams, including in decisions about how they structure their crawl access permissions.

The Data Behind the Decline You Are Already Seeing

If your organisation is experiencing the pattern of rising impressions, stable rankings, declining clicks, and collapsing sessions, this is why. The structural data is now clear. Research analysing over 16,000 queries from January 2025 to January 2026 shows that organic click share declined between 11 and 23 percentage points depending on vertical. The headphones category fell from 73% to 50% organic click share in a single year. More than 60% of Google searches now end without a click to a website. For news-related queries, that figure reached 69% by May 2025.

The in-SERP browsing development accelerates a trend that AI Overviews and AI Mode already created. Organic CTR for queries with AI Overviews dropped from 1.76% to 0.61% according to Seer Interactive’s September 2025 research – a 65% decline. The in-SERP browsing frame compounds this by capturing even the clicks that do occur, keeping the session inside Google’s controlled environment rather than transferring it to the publisher.
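The figures above mix two different measures, and it is worth keeping them apart when you report internally. A fall from 73% to 50% click share is 23 percentage points. A fall from 1.76% to 0.61% CTR is a 65% relative decline. The short sketch below reproduces both calculations from the numbers quoted in this section. The helper names are my own.

```python
def point_change(before_pct: float, after_pct: float) -> float:
    """Absolute change in percentage points."""
    return round(after_pct - before_pct, 2)


def relative_change(before: float, after: float) -> float:
    """Change relative to the starting value, expressed as a percentage."""
    return round((after - before) / before * 100, 1)


if __name__ == "__main__":
    # Headphones organic click share: 73% -> 50%
    print(point_change(73, 50), relative_change(73, 50))
    # Organic CTR on AI Overview queries: 1.76% -> 0.61%
    print(point_change(1.76, 0.61), relative_change(1.76, 0.61))
```

Stating which measure you are using avoids the common boardroom confusion between a drop of 23 points and a drop of 23 percent.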

There is a nuance worth noting for enterprise leaders. The brands that are cited inside AI Overviews and AI Mode summaries see 35% higher organic CTR and 91% higher paid CTR than brands that are not cited on the same queries. This is not a coincidence. It reflects the way authority, entity recognition, and citation frequency are becoming the primary determinants of visibility in an AI-mediated search environment.

This is the strategic fork. Your enterprise either builds the kind of authority that earns citation in AI-generated responses, or it watches its organic funnel contract quarter by quarter.

The Chrome Dependency: The One Structural Weakness in Google’s Model

Every enterprise SEO strategy should include a clear understanding of Chrome’s role in enabling this model. Google’s in-SERP browsing relies entirely on Chrome’s ability to inject UI elements into third-party pages and override standard web security headers. This behaviour appears tightly coupled to Chrome’s environment and is not consistently reproducible across other browsers. Outside Chrome, Google cannot trap users inside the SERP. It must behave like a traditional search engine.

This introduces a strategic variable that enterprise teams have never had to model before: browser market share as a component of organic traffic risk. If Chrome’s market share declines, Google’s ability to execute this capture strategy weakens proportionally. Perplexity’s release of its own AI-first browser, Comet, in July 2025 and OpenAI’s launch of its ChatGPT Atlas browser in October 2025 signal that the browser layer is becoming a contested competitive space for the first time in years.

For enterprise teams, this means monitoring browser distribution in your analytics data is no longer just a technical hygiene task. It is a proxy indicator for the structural risk this model poses to your organic traffic.
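Tracking that proxy takes very little work. The sketch below assumes you can export organic sessions by browser for each month. The browser labels and sample counts are hypothetical, and your analytics platform may name browsers differently.

```python
from typing import Dict, List, Tuple


def chrome_share(sessions_by_browser: Dict[str, int]) -> float:
    """Fraction of organic sessions that arrived from Chrome."""
    total = sum(sessions_by_browser.values())
    if total == 0:
        return 0.0
    return round(sessions_by_browser.get("Chrome", 0) / total, 3)


def share_trend(monthly: List[Tuple[str, Dict[str, int]]]) -> List[Tuple[str, float]]:
    """Chrome share for each (month, sessions_by_browser) snapshot, in order."""
    return [(month, chrome_share(counts)) for month, counts in monthly]


if __name__ == "__main__":
    snapshots = [
        ("2025-11", {"Chrome": 650, "Safari": 250, "Edge": 60, "Firefox": 40}),
        ("2025-12", {"Chrome": 630, "Safari": 260, "Edge": 65, "Firefox": 45}),
    ]
    print(share_trend(snapshots))
```

A falling Chrome share in your own organic traffic would suggest lower exposure to this model, under the assumption that the behaviour really is limited to Chrome.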

The impact of this shift becomes visible only when you track whether your content is still being used, which is exactly what NovaX measures beyond traditional traffic metrics.

What the Strategic Response Looks Like

The response to this shift operates at three levels: measurement, content architecture, and channel diversification.

Measurement comes first. Before your organisation can respond strategically, it needs accurate data. The standard GA4 plus GSC stack is no longer sufficient on its own. You need to separate branded from non-branded query performance. You need to track AI Overview citation frequency for your target queries. You need to monitor referrer data for signs of framing-related session loss. And you need to build a share-of-voice model that captures visibility in AI-generated responses across Google, Bing, Perplexity, and ChatGPT – not just traditional SERP rankings.
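Two of these measurements can be sketched in a few lines. The example below splits query-level clicks into branded and non-branded using a list of brand terms, and computes a simple citation rate across tracked queries. The brand terms, queries, and function names are hypothetical. How you collect the citation observations, whether by manual checks or a third-party tool, is outside the scope of this sketch.

```python
import re
from typing import Dict, Iterable, List, Tuple


def split_branded(rows: Iterable[Tuple[str, int]],
                  brand_terms: List[str]) -> Dict[str, int]:
    """Sum clicks for queries that do and do not mention a brand term."""
    if not brand_terms:
        raise ValueError("at least one brand term is required")
    # Escape each term so punctuation in brand names is matched literally.
    pattern = re.compile("|".join(re.escape(term) for term in brand_terms),
                         re.IGNORECASE)
    totals = {"branded": 0, "non_branded": 0}
    for query, clicks in rows:
        key = "branded" if pattern.search(query) else "non_branded"
        totals[key] += clicks
    return totals


def citation_rate(observations: Dict[str, bool]) -> float:
    """Share of tracked queries where the brand was cited in an AI answer."""
    if not observations:
        return 0.0
    return round(sum(observations.values()) / len(observations), 3)


if __name__ == "__main__":
    rows = [("acme crm pricing", 120), ("what is a crm", 300), ("Acme login", 80)]
    print(split_branded(rows, ["acme"]))
    print(citation_rate({"what is a crm": True, "crm vs erp": False}))
```

Reporting these two numbers alongside traffic shows leadership where visibility is holding even as clicks fall.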

If your team is still presenting organic performance to leadership as a traffic and ranking report, you are measuring a system that no longer exists in that form. I have written about this problem in detail in Measuring Visibility in the Age of AI Search and The Death of the Organic Click as a KPI.

Content architecture determines citation eligibility. The brands that survive this structural shift are the ones that earn citations in AI-generated responses. That requires a specific type of content: structured, entity-rich, clearly attributed, semantically precise, and built on a foundation of genuine expertise signals. Generic, mass-produced content loses in this environment. Demonstrated authority on specific topics, with a consistent content structure that AI systems can extract and attribute, wins.

This connects directly to entity-based SEO and authority engineering – both of which have become essential components of enterprise visibility strategy, not optional advanced tactics.

Channel diversification is now a risk management requirement. Relying on Google as your primary organic discovery channel is no longer a neutral strategic choice. It carries a concentration risk that your organisation’s risk management frameworks should be quantifying. Bing still sends real, attributable clicks. Perplexity is growing as a discovery layer for high-intent research queries. ChatGPT browsing reaches 800 million weekly users. Direct traffic, owned channels, and email remain the most reliable conversion-path assets you control.

Blocking Googlebot was unthinkable three years ago. For some publishers in heavily informational categories, it is now a strategically rational option worth modelling, particularly where the crawl is generating zero reciprocal visit value. The calculation differs significantly by organisation size and Google dependency. For large enterprises with Google embedded in paid media, Shopping, and Maps alongside organic, partial crawl restrictions or snippet controls are a more practical starting point than full Googlebot exclusion. The principle is the same: the value exchange should be explicit, not assumed.
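To make the partial options concrete, the sketch below tests a draft robots.txt with Python’s standard `urllib.robotparser` before anything is deployed, and builds a robots meta tag using the documented `max-snippet` rule. The paths, the snippet limit, and the example.com URLs are hypothetical. Python’s parser is simpler than Googlebot’s own rule matching, so treat the result as a sanity check rather than a guarantee. Whether snippet controls have any effect on the in-SERP browsing frame itself is not something I can confirm.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical draft: keep Googlebot out of one informational section only.
DRAFT_ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /guides/

User-agent: *
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check whether a draft robots.txt lets the given crawler fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


def robots_meta(max_snippet: int) -> str:
    """Build a robots meta tag that caps text snippet length in characters."""
    return f'<meta name="robots" content="max-snippet:{max_snippet}">'


if __name__ == "__main__":
    print(is_allowed(DRAFT_ROBOTS_TXT, "Googlebot", "https://example.com/guides/seo"))
    print(is_allowed(DRAFT_ROBOTS_TXT, "Googlebot", "https://example.com/products/a"))
    print(robots_meta(50))
```

Starting with one section and measuring the effect on visibility and pipeline is a lower-risk test than a site-wide change.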

The gain from implementing this strategic framework is significant. Enterprises that shift their measurement to share-of-voice and citation frequency, build content architecture optimised for AI retrieval, and diversify their discovery channels consistently maintain organic-driven pipeline contribution even as traditional click volume declines. The organisations I work with that have made this shift report that conversion quality from AI-cited traffic exceeds what standard organic clicks deliver, because the user arrives with higher intent and greater pre-qualification.

The cost of not implementing it is structural pipeline erosion. If you wait until the click collapse is visible in your revenue dashboard, you are already six to twelve months behind the inflection point where the response would have been most effective.

What This Means for Marketing Automation, Paid Media, and SEO Execution

The implications of in-SERP browsing extend beyond organic traffic. They fundamentally disrupt the operating assumptions behind marketing automation systems, paid media optimisation, and how SEO teams prioritise their work.

Marketing Automation: The Top of Funnel Is Disappearing

Most enterprise marketing automation systems are built on a simple premise: that anonymous users arrive on your website, enter the funnel, and can then be tracked, scored, and nurtured over time. That premise is breaking.

If users consume your content inside a Google-controlled environment without triggering a measurable session, they never enter your marketing automation system at all. There is no cookie, no behavioural profile, no lead scoring signal, and no retargeting eligibility. The practical consequence is not just reduced traffic. It is a shrinking addressable audience within your own systems.

Your CRM and marketing automation platform will increasingly reflect only mid- and bottom-funnel users, creating a distorted view of demand generation performance. Early-stage influence, historically driven by informational organic content, becomes invisible.

This has two implications for enterprise teams. First, attribution models that rely on multi-touch journeys degrade in accuracy. Second, the role of owned channels such as email, direct traffic, and authenticated user environments becomes more important, because they are among the few environments where user identity and behaviour can still be reliably captured.

Paid Media: CPC Inflation and Retargeting Erosion

As organic visibility converts into fewer measurable visits, the pressure shifts directly into paid channels.

When users remain inside Google’s interface, paid placements become one of the only reliable ways to drive a fully controlled visit. This creates a structural upward pressure on cost-per-click, particularly in informational and mid-funnel query categories that historically relied on organic discovery.

At the same time, retargeting pools begin to degrade. If fewer users are recorded as site visitors, fewer users can be added to remarketing audiences. This reduces the efficiency of lower-funnel paid campaigns, which depend on warm audiences for conversion.

The result is a double impact: higher acquisition costs at the top of the funnel, and lower efficiency at the bottom. Enterprise paid media teams should expect to see:

- rising CPCs on non-branded queries

- shrinking remarketing audience sizes

- declining return on ad spend for retargeting campaigns

This is not a campaign optimisation issue. It is a structural change in how users enter, or fail to enter, your measurable ecosystem.

SEO Execution: From Traffic Acquisition to Citation and Influence

SEO teams need to adjust not just tactics, but their definition of success.

In a model where clicks do not reliably translate into sessions, optimising for rankings and traffic volume becomes insufficient. The objective shifts toward visibility within AI-generated responses and influence before the click occurs. This changes how SEO work is prioritised.

Content is no longer only a traffic acquisition asset. It becomes a source document for:

- AI summaries

- entity association

- brand citation

Technical SEO remains critical, but its role expands from crawlability and indexing toward ensuring that content can be reliably parsed, extracted, and attributed by AI systems.

Internal linking, content structure, and semantic clarity are no longer just ranking factors. They are retrieval signals.

The most effective SEO teams will operate less like traffic acquisition units and more like visibility engineering functions, optimising for presence across search interfaces, AI systems, and discovery layers, not just traditional SERPs.

The Operational Reality

Taken together, these changes create a feedback loop inside enterprise marketing systems.

Organic traffic declines reduce the inflow of new users into marketing automation platforms. This weakens retargeting pools, increasing reliance on paid acquisition. Rising paid costs then force stricter performance expectations, which are harder to meet due to degraded attribution and tracking.

Without a structural adjustment to measurement and strategy, this loop leads to a gradual erosion of marketing efficiency across channels.

This is why the response to in-SERP browsing cannot sit within SEO alone. It requires coordination across SEO, paid media, analytics, and marketing operations teams – and, critically, alignment at the leadership level on how performance is measured and valued.

The Strategic Question Google Has Created for Every Enterprise Publisher

The question that enterprise leadership teams need to answer is this: if Google takes your content, uses it to answer queries, keeps the user, prevents the visit, breaks your attribution, and collapses your funnel, what are you gaining by allowing unrestricted crawl access?

This is not a rhetorical provocation. It is a genuine strategic calculation that is now available to every enterprise SEO team for the first time. The answer will differ by industry, by traffic dependency, and by the strength of your alternative discovery channels. But the calculation needs to happen explicitly, not be avoided because the question feels uncomfortable.

I have covered the alternative search ecosystem and why Google is no longer the only door into organic discovery in more depth at Google Is No Longer the Only Door Into Organic Discovery. The argument has become significantly more urgent since that article was published.

Key Takeaways

- Google’s in-SERP browsing loads your pages inside a Google-controlled frame, keeping users inside Google’s environment even after they click your result. Your brand, your CTA, and your conversion infrastructure all operate at a disadvantage inside that frame.

- Your analytics data is already partially broken. Missing sessions, broken referrers, and inflated direct traffic are symptoms of framing-related attribution loss. The GSC-to-analytics discrepancy you may be seeing is not a tagging error; it is a structural reporting break.

- The legal and regulatory exposure is real. Framing without consent, anti-competitive conduct, and copyright extraction are all areas where this behaviour intersects with existing enforcement frameworks, particularly the EU Digital Markets Act and the ongoing DOJ antitrust case.

- Chrome is the enforcement mechanism. In any non-Google browser, this model does not work. Browser market share is now a component of your organic traffic risk model.

- The brands that remain visible in this environment earn citation inside AI-generated responses. That requires entity authority, structured content, and genuine expertise signals, not volume-based content production.

- Channel diversification is now a risk management requirement. The concentration risk of a Google-only organic strategy is quantifiable, and your leadership team should be quantifying it.

- The strategic calculation around Googlebot access has changed. Modelling the value exchange between crawl permission and traffic reciprocity is now a legitimate enterprise decision, not an extreme position.

Work With Me

If your enterprise is navigating this shift and your current measurement framework, content architecture, or channel strategy was built for a search environment that no longer exists, I can help you build one that works in the environment that does.

I work with SEO Managers, Heads of Digital, VPs, and C-suite leaders at enterprise organisations to translate structural search changes into clear strategic decisions, with the commercial rigour that internal teams and boards require.

Start with the Search Visibility Diagnostic to understand where your organisation’s risk is concentrated. Or get in touch directly if you want to discuss your specific situation.

Frequently Asked Questions

In traditional search, clicking a result opens the destination website as a standalone page in a new tab or the current window. Google’s in-SERP browsing instead loads the destination page inside a Google-controlled frame within the search interface. The user never fully leaves Google’s environment. They can switch between results, return to the SERP instantly, and continue the search session entirely inside Google’s interface, without the destination site ever functioning as a true standalone visit.

Analytics tools do not track these visits reliably. Depending on how Google renders the page inside its frame, your analytics tracking scripts may or may not fire. The result is missing sessions, broken attribution chains, and inflated direct traffic in GA4. Google Search Console may still report a click, creating a growing discrepancy between GSC click data and GA4 session data. This discrepancy is not a tagging problem; it is a structural reporting break caused by the framing model itself.

The X-Frame-Options header and the Content-Security-Policy frame-ancestors directive exist precisely to prevent unwanted framing, and Chrome, under Google’s control, appears able to override them within Google’s own interface. In any other browser, these headers would prevent your site from being loaded inside a frame. Chrome’s apparent ability to bypass this security mechanism is central to why Google’s in-SERP browsing works at all, and why it represents a unique concentration of power that no other search engine possesses.

The effect on conversion infrastructure is direct and, in some cases, severe. Payment providers often block framed environments as a security measure. Checkout flows that rely on session context or full-page environments may break silently. Cookie consent banners and privacy notices may not display correctly, creating GDPR compliance risk. And even where the technology functions, conversion intent is lower when users feel they are still inside Google’s environment rather than on your brand’s domain.

For some organisations, the case for allowing unrestricted Googlebot access has changed significantly. The traditional calculation was straightforward: allow Googlebot to crawl your site, and Google sends you visitors. That exchange is now asymmetric. Google extracts content value, Google’s AI systems use it to answer queries, and fewer users arrive at your site as a result. For enterprises in heavily informational categories where Google’s in-SERP browsing captures even the clicks that do occur, modelling the actual value exchange of crawl access is no longer a radical exercise; it is a legitimate strategic decision.

In-SERP browsing does not work in any browser that is not Chrome. Firefox, Safari, Brave, and Edge all respect standard framing security headers. In these browsers, Google cannot load your site inside its own interface. It must send users to your site as a genuine visit. This is why Chrome’s market share is now a component of your organic traffic risk model, not just a technical analytics consideration.

Shift your primary measurement from traffic volume to share of voice and citation frequency. Track how often your brand and content are cited in AI Overview responses for your target queries. Monitor branded search volume as a proxy for awareness and authority. Measure conversion quality from different traffic sources rather than volume alone. Build a visibility model that spans Google, Bing, Perplexity, and ChatGPT rather than relying exclusively on GSC data. This is the measurement architecture that reflects how search actually works in 2026.

It affects every organisation whose customer journey includes an organic search touchpoint – which includes virtually every B2B enterprise with a content-driven demand generation model. The conversion risk is in many ways more acute for B2B, where long research cycles, multiple decision-makers, and high-consideration purchasing decisions make every touchpoint in the early funnel commercially significant. If your enterprise uses informational content to build a pipeline and pre-qualify buyers, the in-SERP browsing model threatens the integrity of that entire funnel.