Table of contents

- Note On Methodology

- The Decision Most SEOs Would Never Make

- Why the Old Website Had to Go

- The Architecture: Built for Understanding, Not Decoration

- Website Clustering: Living Systems, Not Theoretical Diagrams

- Content Philosophy: Diagnostic, Not Generic

- Entity Signals: Consistent, Clear, and Cross-Platform

- Technical Health: Clean, Stable, and Fully Compliant

- The Global SERP Footprint: Page-1 Rankings Across Continents

- The Estimated Value of Getting This Right

- Why This Worked: The Diagnostic Explanation

- What I Learned From Rebuilding From Zero

- Methodology

- What Comes Next: The Path to Global Authority

- Frequently Asked Questions

Note On Methodology

This rebuild was executed entirely according to established SEO and content best practices. No shortcuts, artificial amplification, automated content production, or trend-driven tactics were used. The only method was ethical authority engineering.

The objective was not to “game” search systems but to build a clean, authoritative foundation from the first URL: clear architecture, expert-level content, coherent internal linking, and consistent entity signals across platforms.

In an industry increasingly distracted by GEO hype, AI-generated noise, and superficial optimisation tricks, this project intentionally focused on fundamentals done properly. The results documented in this case study reflect what happens when those fundamentals are applied consistently from the start.

The Decision Most SEOs Would Never Make

I deleted my entire website. Every URL, every article, every template, every piece of content – gone. Four years of accumulated work were wiped out completely. Most SEO professionals would call that professional self-destruction. I called it the only logical decision.

SrnaSEO.com had existed for nearly four years, but the old structure no longer reflected how I think, how I work, or how I advise enterprise clients. The architecture was rigid, the content had drifted from my actual frameworks, and the site had accumulated layers of structural noise that no amount of patching would fix. The honest diagnosis was clear: the foundation was wrong. You cannot build a global advisory platform on a broken base.

So I applied the same diagnostic discipline I bring to enterprise SEO engagements. I stopped optimising the wrong system and built the right one instead.

What happened next surprised even me. Within 40 days of the rebuild, the site reached page one of Google across Europe, Asia, and Oceania. Multiple pages achieved positions between #1 and #4 in competitive industrial and digital markets – Hong Kong, Sweden, Singapore, China, and the Netherlands, among them. This happened while only 36–40% of the site was indexed. Google had discovered roughly 70% of the URLs, but more than half the articles were still waiting to enter the index. Despite that, the site was already outperforming organisations that have been in those markets for decades.

This case study explains what was done, why it worked, and what the early trajectory reveals about how modern search systems evaluate expertise, architecture, and entity clarity.

Why the Old Website Had to Go

The previous version of SrnaSEO.com was built entirely in static HTML. Fast, yes – but completely rigid. Editing was slow and manual. The site functioned more like a digital business card than a living advisory platform. There was no CMS, no structured content system, no semantic clustering, and no efficient way to expand into a research ecosystem.

Rigidity is the enemy of growth. Speed without scalability is not a competitive advantage – it is a ceiling.

I made the decision to replace it with a clean WordPress installation rebuilt from absolute zero. This was not a migration. It was not a redesign. It was a full reset. New CMS, new templates, new content, new URL structure, new semantics, new internal linking, and a new identity. Google had to forget the old version and learn the new one entirely from scratch, which makes the early results even more significant.

The cost of staying on the old system? Continued invisibility. Every month of structural noise is a month of delayed authority. The opportunity cost of not rebuilding was larger than the short-term disruption of resetting.

The Architecture: Built for Understanding, Not Decoration

The rebuild intentionally ignored short-term SEO trends and focused on fundamentals: architecture clarity, semantic precision, expert-level content depth, and entity consistency.

The first step in the rebuild was designing an architecture that Google could interpret instantly. I wanted a system where every page had a purpose, every category had a role, and every internal link reinforced a semantic cluster. No plugin bloat, no theme pollution, no unnecessary JavaScript, no duplicated templates.

The site was built around:

- A clear homepage that defines the entity

- A strong About page that reinforces expertise

- A Research section that signals depth

- Diagnostic articles that demonstrate topical authority

- Clean URLs and metadata

- Zero cannibalization

- Zero structural noise

Most organisations underestimate this step. Architecture is not about menus and breadcrumbs. It is about how a search engine understands your identity. When Google sees a coherent structure, consistent semantics, and a clear topical boundary, it does not treat the domain like a random blog. It treats it like a potential authority.

That is why my homepage, About page, and Research page all ranked between positions 2.3 and 4.2 globally within the first month. Google was not guessing. It understood the entity.

If you are currently managing an enterprise site with years of accumulated structural decisions layered on top of each other, I encourage you to read my work on structural decay in enterprise SEO – the patterns are predictable and the cost of inaction compounds over time.

Website Clustering: Living Systems, Not Theoretical Diagrams

The structural design of the rebuilt site is intentionally minimalistic, clean, and semantically coherent. Every page that is meant to rank – whether a diagnostic article, a research asset, a category hub, or a service page – sits within a clear cluster and connects internally in a way that reinforces both topical relevance and crawlability.

Instead of relying on bloated navigation systems or artificial link modules, the internal linking strategy lives directly inside the content. Each page carries a minimum of five to seven contextual internal links, all pointing toward closely related pages within the same semantic family.
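The five-to-seven link minimum can be checked mechanically. The sketch below is illustrative only and is not the tooling used in this rebuild: it assumes the article body is available as an HTML string, treats links inside `<p>` and `<li>` elements as "contextual", and uses the hypothetical host `example.com`.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse

MIN_CONTEXTUAL_LINKS = 5  # lower bound of the "five to seven" rule (assumed cut-off)


class _BodyLinkParser(HTMLParser):
    """Collects same-host links that appear inside <p> or <li> elements."""

    def __init__(self, page_url):
        super().__init__()
        self.page_url = page_url
        self.host = urlparse(page_url).netloc
        self.depth = 0  # how many <p>/<li> elements we are currently inside
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag in ("p", "li"):
            self.depth += 1
        elif tag == "a" and self.depth > 0:
            href = dict(attrs).get("href")
            if not href:
                return
            # Resolve relative URLs and drop #fragments so duplicates collapse.
            target = urldefrag(urljoin(self.page_url, href)).url
            if urlparse(target).netloc == self.host and target != self.page_url:
                self.links.add(target)

    def handle_endtag(self, tag):
        if tag in ("p", "li") and self.depth > 0:
            self.depth -= 1


def contextual_internal_links(page_url, body_html):
    """Return the sorted, de-duplicated internal links found in body text."""
    parser = _BodyLinkParser(page_url)
    parser.feed(body_html)
    return sorted(parser.links)


sample = (
    '<p>See <a href="/b/">b</a>, <a href="/b/#top">b again</a> '
    'and <a href="https://other.org/x">x</a>.</p>'
    '<div><a href="/c/">c</a></div>'
)
links = contextual_internal_links("https://example.com/a/", sample)
needs_more_links = len(links) < MIN_CONTEXTUAL_LINKS
```

Passing only the article body keeps navigation and footer links out of the count, which matches the idea that the linking lives inside the content.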

The result is a website where clusters function as living systems. Each cluster has a central anchor page, surrounded by supporting diagnostic articles, research notes, and category hubs that strengthen the semantic boundary. The internal links form a network dense enough for Google to understand the relationships, yet clean enough to avoid cannibalization or structural noise.

Every page contributes to the authority of its cluster. Every cluster contributes to the authority of the entire domain. This is not a metaphor – it is measurable in how quickly Google indexed the representative sample and began assigning ranking positions.

For a deeper framework on how to build this kind of architecture, my semantic cluster blueprint walks through the structural logic in full diagnostic detail.

Content Philosophy: Diagnostic, Not Generic

I did not publish generic SEO tutorials. I published the frameworks I actually use in enterprise advisory work:

- International SEO & GEO Strategy

- Semantic Cluster Blueprint

- Indexation and Crawl Diagnostics

- Visibility Strategy

- AI Search Readiness Audit

- SEO Maturity Model

These concepts do not appear on mass-market SEO blogs. They are diagnostic frameworks that fill real gaps in the industry. When you publish something that does not exist elsewhere, you are not competing – you are defining the category.

Every article is written in long form, with depth, clarity, and a senior advisory tone. The content is grounded in more than 25 years of experience across startups, SMEs, and global enterprise organisations including Atlas Copco and the Adecco Group. Each page carries clear definitions, consistent terminology, and a structured approach that helps both users and search systems understand the underlying concepts.

The financial argument for this approach is straightforward. Generic tutorial content competes in the most crowded space of the internet and rarely converts at the enterprise level. Diagnostic, expert-driven content attracts the decision-makers who are already aware they have a problem – and who have the authority to engage an advisor. One well-targeted diagnostic article that converts a VP of Digital is worth more than ten thousand generic blog visitors.

The cost of not publishing at this depth? Invisibility among the buyers who matter. Generic content is not a neutral choice – it is a signal to search engines and to your ideal client that your thinking is not differentiated.

Entity Signals: Consistent, Clear, and Cross-Platform

Entity signals were crafted intentionally across the entire website and beyond. My identity, expertise, and frameworks are reinforced not only on srnaseo.com but also across LinkedIn, Medium, and other platforms. This cross-platform consistency prevents confusion for both Google and large language models, giving search systems a unified picture of the entity behind the content.

On the website itself, every page reinforces the same identity. The About page, Research page, author information, terminology, and internal linking all work together to strengthen the entity signal. This is one of the primary reasons Google was able to trust the new version of the site so quickly despite the full reset.

When your identity, terminology, and frameworks are aligned everywhere Google looks, the system does not need months to form a view. It forms one quickly – and it forms the right one.

This matters significantly in the current search environment. As I covered in my piece on AI search readiness, LLMs and AI-powered search engines retrieve from entities they can reliably identify. Ambiguity is not neutral – it is a retrieval risk. Clear, consistent entity signals are now a strategic asset, not an optional detail.

Technical Health: Clean, Stable, and Fully Compliant

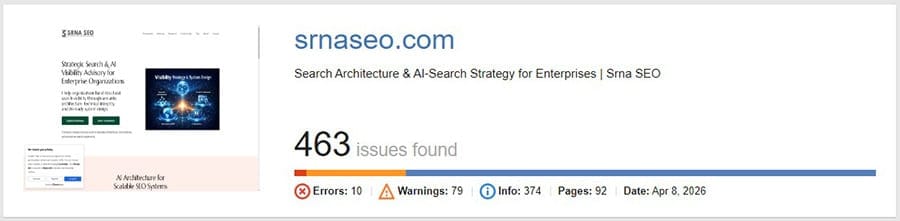

From a technical standpoint, the rebuilt website performs exceptionally well. A full audit surfaced only 10 errors, all limited to duplicate titles and meta descriptions on paginated category pages. Nothing more. These are non-critical issues that arise naturally when categories span multiple pages and carry no negative impact on crawlability, indexation, or ranking performance.

The audit also surfaced 46 warnings, most relating to longer page titles or extended meta descriptions. These are intentional – longer titles preserve semantic clarity and communicate the diagnostic nature of the content.
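Both audit findings, duplicate titles and long titles, are simple to reproduce from a crawl export. The following is a minimal sketch, not the audit tool referenced above. It assumes a mapping of URL to title, and the 60-character limit is an assumed cut-off, since audit tools differ on where they draw that line.

```python
from collections import defaultdict


def audit_titles(titles, max_len=60):
    """Return (duplicate groups, URLs whose title exceeds max_len).

    `titles` maps URL -> <title> text. `max_len` is an assumed threshold.
    """
    groups = defaultdict(list)
    for url, title in titles.items():
        # Compare case-insensitively and ignore surrounding whitespace.
        groups[title.strip().lower()].append(url)

    duplicates = {t: sorted(urls) for t, urls in groups.items() if len(urls) > 1}
    too_long = sorted(u for u, t in titles.items() if len(t.strip()) > max_len)
    return duplicates, too_long


# Hypothetical crawl export: two paginated category pages share one title.
crawl = {
    "/category/research/": "Research",
    "/category/research/page/2/": "Research",
    "/about/": "About",
    "/long/": "A deliberately long diagnostic title that preserves semantic clarity for readers",
}
duplicates, too_long = audit_titles(crawl)
```

Paginated category pages land in the duplicates group, which is the pattern described above. Whether to act on a long title remains an editorial decision rather than something the script decides.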

What the audit did not find matters more: no critical errors, no broken templates, no indexing blockers, no JavaScript rendering failures, no mobile usability issues, no Core Web Vitals regressions, and no structural inconsistencies.

On performance, the desktop metrics reflect the priorities of the audience. Business professionals and enterprise buyers predominantly work from desktop. The site achieves a First Contentful Paint of 0.7 seconds, a Largest Contentful Paint of 0.9 seconds, a Total Blocking Time of 40 ms, a Cumulative Layout Shift of 0.031, and a Speed Index of 0.7 seconds. The page is visually complete in under one second on desktop.
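To put those numbers in context, the sketch below compares the reported values with commonly cited "good" thresholds. The thresholds are assumptions taken from public guidance (2.5 s for LCP and 0.1 for CLS from Core Web Vitals, 1.8 s for FCP and 200 ms for TBT from Lighthouse guidance). Lighthouse scores desktop runs on curves rather than hard limits, so treat this as a rough sanity check.

```python
# Assumed "good" thresholds; verify against current documentation before use.
GOOD_THRESHOLDS = {"fcp_s": 1.8, "lcp_s": 2.5, "tbt_ms": 200, "cls": 0.1}

# Desktop values reported in this case study.
REPORTED = {"fcp_s": 0.7, "lcp_s": 0.9, "tbt_ms": 40, "cls": 0.031}


def headroom(reported, thresholds):
    """Fraction of each threshold left unused (1.0 = no cost, 0.0 = at limit)."""
    return {m: round(1 - reported[m] / thresholds[m], 2) for m in thresholds}


margins = headroom(REPORTED, GOOD_THRESHOLDS)
all_within_limits = all(value > 0 for value in margins.values())

for metric, margin in margins.items():
    print(f"{metric}: {margin:.0%} headroom")
```

Speed Index is left out because its threshold depends on the device profile.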

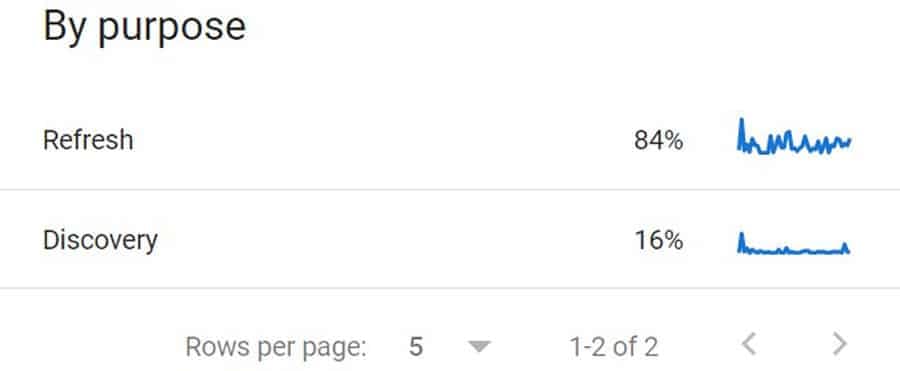

Average server response time sits at 240 ms. Approximately 98% of crawled pages return a 200 status code. The crawl distribution shows 84% refresh crawling and 16% discovery – exactly the pattern I would expect at this stage of a rebuild: Google validating the new structure while gradually exploring the remaining URLs.
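The same ratios can be derived from raw crawl records. This is a minimal sketch under assumed inputs: each record is a dictionary with a `status` code and a `purpose` label, and the sample data is hypothetical, constructed only to mirror the percentages reported above.

```python
from collections import Counter


def crawl_summary(records):
    """Share of 200 responses and share of each crawl purpose."""
    total = len(records)
    if total == 0:
        return {"ok_share": 0.0, "purpose_share": {}}

    status = Counter(r["status"] for r in records)
    purpose = Counter(r["purpose"] for r in records)
    return {
        "ok_share": status[200] / total,
        "purpose_share": {p: n / total for p, n in purpose.items()},
    }


# Hypothetical sample: 50 requests, 42 refresh and 8 discovery, one 404.
records = (
    [{"status": 200, "purpose": "refresh"} for _ in range(42)]
    + [{"status": 200, "purpose": "discovery"} for _ in range(7)]
    + [{"status": 404, "purpose": "discovery"}]
)
summary = crawl_summary(records)
```

A rising discovery share over the following weeks would indicate Google moving from validating known URLs to exploring the remainder.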

If your enterprise site carries a heavy backlog of technical debt, my technical SEO risk management framework offers a prioritisation approach grounded in actual impact rather than tool-generated severity scores.

The Global SERP Footprint: Page-1 Rankings Across Continents

The most significant result was not that the site ranked – it was where it ranked.

Tier 1 Markets (Positions 1–4): Hong Kong, Sweden, China, Singapore, Netherlands, New Zealand, and others. In only 40 days! These are competitive industrial and digital markets with strong SEO ecosystems. Rankings at positions 1–4 here signal algorithmic confidence, not early-stage testing.

Tier 2 Markets (Positions 5–12): Czechia, Vietnam, India, Finland, Ireland, Japan, South Korea, Australia, Malaysia, Spain. These markets reflect Google testing the domain on page one while waiting for additional authority signals before pushing into the top three.

Tier 3 and 4 Markets (Positions 13–40+): Germany, US, UK, Canada… These represent the most competitive SEO ecosystems in the world. Breaking into the top 20 within 40 days is ahead of any reasonable schedule for a domain rebuilt from zero.

At the page level, the performance distribution tells a clear story:

| Page | Average Position |
|---|---|
| Homepage | 2.3 |
| About | 2.6 |
| Research | 4.2 |
| Technical SEO | 4.7 |
| Portfolio | 4.5 |
| Category pages | 5.4 |
| Contact | 6.0 |
| Testimonials | 7.6 |

Even deep pages – portfolio items, terms of service, and privacy policy – are ranking. The system is being evaluated holistically, not page by page.

At the query level, Google is ranking the site for concepts I introduced to the industry:

| Query | Position |
|---|---|
| “crawl constraints” seo | 1.5 |
| semantic blueprint | 4.6 |
| international cannibalization | 6.5 |
| ai readiness audit in seo | 8.5 |
| seo mentorship program | 18.8 |

This is how thought leadership becomes search leadership. When you define the vocabulary of a field, the search system has no competitor to weigh your content against.

Early Traffic Signals

Search rankings were not the only early signal that the rebuilt system was being discovered. Between February 24, when the new website went live, and the end of March, total traffic rose to roughly four times the previous baseline.

What makes the distribution particularly interesting is the channel mix. Direct traffic accounted for roughly 46.7% of visits, followed by 33.3% classified as unassigned, 13.3% from organic search, and 6.7% from referral sources. For a newly rebuilt site with only 36–40% of its URLs indexed, this pattern suggests that discovery is occurring through more than traditional search engine results.

Direct and unassigned traffic frequently include visits originating from environments where referral information is not consistently passed to analytics systems. This can include links opened from AI assistants, embedded research tools, messaging platforms, or other privacy-protected contexts. As AI-mediated discovery becomes more common, it is increasingly normal for a portion of this traffic to appear in these categories rather than under classical referral or search channels.

In practical terms, this means the site is already being surfaced in research and discovery environments before the search index is fully populated. The indexed articles act as an entry point, while the broader content system gradually becomes visible as Google expands its coverage.

This pattern also highlights a broader industry blind spot. Many corporate websites still design their content primarily for classical search rankings while ignoring the environments where discovery increasingly begins – AI assistants, research tools, and conversational interfaces. When content lacks clear structure, deep expertise signals, and well-defined frameworks, these systems struggle to retrieve it reliably. As a result, organisations may unintentionally forfeit a significant share of potential discovery traffic long before it ever reaches the search results page.

The Estimated Value of Getting This Right

The financial stakes of authority engineering are significant and measurable. For an enterprise organisation operating in competitive international markets, a shift from page two to positions 1–4 across multiple markets typically generates a 30–60% increase in qualified organic traffic. At an enterprise conversion rate, even a modest improvement in organic lead quality can translate into millions in incremental pipeline value annually.

For an independent advisory practice, the conversion dynamic is different, but the leverage is equally real. A single page-1 ranking for a high-intent advisory query – “enterprise SEO consultant,” “international SEO strategy,” “SEO governance framework” – represents direct access to decision-makers who are actively searching for the expertise you offer. The commercial value of that visibility, compounded over 12 to 24 months, exceeds what most organisations spend on paid search in a year.

The cost of not implementing this model is equally concrete. Every month a site operates with structural noise, semantic cannibalization, or entity ambiguity is a month of delayed trust-building with search systems. In competitive international markets, that delay is not recoverable without a substantial investment of time and expertise. The organisations that are ranking in those Tier 1 markets today built their structural clarity months or years before you decided to compete.

If you are responsible for organic visibility at an enterprise organisation and you are not confident that your current architecture would survive the diagnostic standard I applied here, that gap is worth examining. I work with SEO Managers, Heads of Digital, and C-suite executives who need an independent view of whether their SEO foundation is built to scale – or to stall.

Why This Worked: The Diagnostic Explanation

This was not luck. It was the convergence of factors that modern search systems are specifically designed to reward:

Expertise over inherited authority. Google trusted the content even when the domain carried no legacy authority signals. The depth and diagnostic nature of the content was sufficient.

Clean architecture. Search engines could understand the site’s structure, intent, and topical boundary immediately. There was nothing to interpret, second-guess, or penalise.

Semantic depth. Clusters were built intentionally, with every internal link serving a structural purpose rather than a superficial one.

Diagnostic content. Frameworks and original concepts outperform tutorials in both ranking and conversion. They attract the right reader and repel the wrong one.

Entity clarity. The homepage, About page, and Research page worked together as a coherent entity triangle. Google did not need to guess who I am or what I represent.

Zero cannibalization. Every article had a unique purpose, a unique semantic boundary, and a unique audience intent. No page competed against another. My framework on semantic cluster governance covers this in detail.

Consistent publishing. Content was released steadily and intentionally, not in bursts that create crawl pressure followed by silence.

This is what authority engineering looks like in practice – not as a theory, but as a sequence of measurable decisions that produce a predictable outcome.
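Of the factors above, zero cannibalization is the easiest to verify from data. The sketch below assumes a Search Console style export of `(query, page, impressions)` rows and flags any query for which more than one page earns impressions. The rows and the `min_impressions` cut-off are hypothetical, and this is not the process used in the rebuild.

```python
from collections import defaultdict


def find_cannibalization(rows, min_impressions=10):
    """Map each query to its competing pages when more than one page qualifies.

    `rows` is an iterable of (query, page, impressions) tuples.
    `min_impressions` is an assumed noise filter.
    """
    pages_by_query = defaultdict(set)
    for query, page, impressions in rows:
        if impressions >= min_impressions:
            pages_by_query[query].add(page)
    return {q: sorted(p) for q, p in pages_by_query.items() if len(p) > 1}


# Hypothetical export rows.
rows = [
    ("semantic blueprint", "/semantic-cluster-blueprint/", 120),
    ("semantic blueprint", "/research/", 4),  # below the noise filter
    ("crawl diagnostics", "/crawl-diagnostics/", 80),
    ("crawl diagnostics", "/technical-seo/", 35),
]
conflicts = find_cannibalization(rows)
```

An empty result is consistent with each page holding a unique semantic boundary. A flagged query is a prompt for review, not proof of a problem, since two pages can legitimately appear for the same broad term.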

What I Learned From Rebuilding From Zero

Rebuilding a website from absolute zero after four years of existence is not only a technical exercise. It is a diagnostic one. It forces you to confront your assumptions about what matters, what does not, and what truly drives authority in modern search systems.

The most important lesson: clarity always wins. When you remove noise, remove legacy decisions, remove outdated structures, and remove everything that no longer reflects your expertise, what remains is a system that search engines can understand instantly. A clean structure, consistent semantics, and intentional internal linking do more for early authority than any backlink campaign ever could.

I also learned that Google responds far more quickly to genuine expertise than most practitioners believe. Even with only 36–40% of the site indexed in the first 40 days, the indexed content carried the entire domain to global page-1 rankings. Search systems are sophisticated at detecting expertise. The early rankings were not coincidental – they were a direct reflection of the depth, clarity, and consistency of the content.

Entity consistency proved more powerful than I anticipated. When your identity, terminology, and frameworks align across your website and across external platforms, Google and LLMs form a confident view of the entity behind the content. This cross-platform reinforcement accelerates trust. It also makes your content more resilient to algorithmic changes, because the system understands who you are, not just what keywords you used. This is especially relevant given how quickly AI-driven search is changing the organic discovery landscape.

Finally, deleting everything is sometimes the right decision. Most organisations try to fix broken systems by adding more layers on top of them. But sometimes the only way to build something clean, scalable, and future-proof is to start over. Authority is not inherited – it is engineered. When you engineer it intentionally, the results arrive faster than expected.

Methodology

All data in this case study was collected directly from Google Search Console, PageSpeed Insights, server logs, and internal analytics. Rankings were evaluated using Search Console’s average position metrics, supported by manual verification across multiple regions. Crawl data was drawn from the Crawl Stats report, including crawl requests, response codes, crawl purpose distribution, and average server response times. Indexing data was based on the Coverage report, focusing on discovered URLs, indexed URLs, and the distribution of “Crawled – currently not indexed” and “Discovered – currently not indexed” states.
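When several exported rows are rolled up into one figure, the positions should be weighted by impressions rather than averaged naively. The sketch below illustrates that arithmetic on hypothetical rows. It is an assumption about how such a roll-up could be done, not a description of the exact calculation behind the tables in this case study.

```python
def weighted_average_position(rows):
    """Impression-weighted mean position across (page, impressions, position) rows."""
    total_impressions = sum(impressions for _, impressions, _ in rows)
    if total_impressions == 0:
        return None  # no impressions means no meaningful position
    weighted_sum = sum(impressions * position for _, impressions, position in rows)
    return weighted_sum / total_impressions


# Hypothetical rows: (page, impressions, average position).
rows = [
    ("/", 100, 2.0),
    ("/about/", 300, 4.0),
]
combined = weighted_average_position(rows)
naive = sum(position for _, _, position in rows) / len(rows)
```

Here the weighted figure is 3.5 while the naive mean is 3.0, because the page with more impressions pulls the combined position toward its own.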

Content analysis was based on my own writing process – long-form, diagnostic, expert-level articles grounded in more than 25 years of experience. Each article was written manually, without AI generation, and structured around clear definitions, frameworks, and semantic boundaries. Internal linking decisions were made manually as well, ensuring every link served a structural purpose within its cluster.

No external tools were used for backlink analysis because this case study focuses on architecture, content, and entity signals – not link acquisition. All conclusions are based on observable data and on the search system’s behaviour during the first 40 days after the rebuild.

What Comes Next: The Path to Global Authority

The next 6–12 months are about compounding what the foundation has already earned:

- Strengthening authority in Tier 2 markets and pushing into top-3 positions

- Breaking into the top 10 in the US, UK, Canada, and Germany

- Expanding the research ecosystem with deeper diagnostic frameworks

- Building brand signals that reinforce entity authority across platforms

- Acquiring high-quality, contextually relevant backlinks

- Reinforcing entity presence across LinkedIn, Medium, and industry publications

The foundation is in place. The trajectory is clear. The site is behaving like a domain that will become a global reference in its niche. The next case study will be more interesting than this one.

Frequently Asked Questions

Yes. One of the most persistent misconceptions in modern SEO is that ranking is primarily driven by backlinks or publishing frequency. In reality, search systems evaluate the depth, structure, and internal coherence of the content itself long before external authority signals accumulate.

Every article on the rebuilt site was written as a diagnostic asset rather than a generic blog post. That means clear definitions, consistent terminology, structured frameworks, and internal links that reinforce the semantic cluster the page belongs to. The goal was not simply to “cover a topic” but to explain it in a way that demonstrates genuine expertise.

Search systems are increasingly capable of detecting that distinction. When content introduces original frameworks, maintains semantic consistency across the site, and integrates naturally into a broader knowledge architecture, it sends strong expertise signals even on a new domain.

This is why the site was able to reach global page-1 rankings while still having less than half of its pages indexed and without relying on new backlinks. The authority signal was embedded in the content itself.

In other words, proper SEO is not decoration added to content – it is the structure that makes the content intelligible to search systems in the first place.

Authority engineering is the practice of intentionally designing a website’s architecture, content depth, entity signals, and semantic structure to accelerate Google’s trust in the domain – rather than waiting passively for authority to accumulate through link acquisition alone. The approach prioritises system clarity and diagnostic expertise over volume and generic coverage.

Migration preserves structural assumptions – and in this case, the structural assumptions were wrong. The old site was static HTML with no semantic clustering, no CMS, and no scalable content system. Carrying those constraints forward into a new build would have limited the result. A full reset allowed me to design the architecture correctly from the first URL rather than inheriting decisions that no longer served the advisory identity I was building.

Google indexes a representative sample first, evaluates the quality of that sample, and then expands indexing as trust is established. Because the indexed content was diagnostically deep, semantically clean, and internally well-connected, Google was able to form a high-confidence view of the domain’s authority and topical boundary very quickly. The unindexed content did not dilute that signal – it was simply waiting its turn.

Entity consistency was one of the most decisive factors. When Google and LLMs can verify your identity, expertise, and terminology across your own site and across external platforms simultaneously, they form a confident entity profile very quickly. That confidence accelerates trust signals across the entire domain, not just on individual pages. Ambiguity, by contrast, delays trust – sometimes permanently.

No. The rebuild produced no new backlinks during the 40-day window. The rankings were driven entirely by architecture quality, semantic depth, diagnostic content, entity clarity, and technical performance. This confirms that link acquisition, while valuable for long-term authority, is not the first variable Google evaluates when encountering a clean, expert-driven system.

Yes, with appropriate scaling. The principles – clean architecture, semantic clustering, diagnostic content, entity consistency, zero cannibalization – apply equally to a five-page advisory site and a 50,000-page enterprise domain. The implementation complexity differs, but the diagnostic logic is the same. If anything, the gains at enterprise scale are larger because the structural problems in most enterprise sites are more severe and the competitive opportunity in correcting them is proportionally greater.

The timeline varies with the size of the domain, the depth of existing structural problems, and how quickly the organisation can implement recommendations. For a clean rebuild like the one described here, 40 days is achievable because there is no legacy debt to work through. In a typical enterprise environment – with hundreds of thousands of URLs, competing internal priorities, and legacy CMS constraints – a meaningful authority shift usually becomes visible within 3–6 months of sustained, properly sequenced implementation.

Solving for symptoms rather than the system. Most enterprise SEO teams focus on individual page optimisations, keyword gaps, or link metrics – without ever addressing the structural architecture that determines how Google understands the domain as a whole. Authority engineering starts from the system level and works downward, which is the reverse of how most organisations approach the problem. This is the diagnostic gap I documented in my research on how enterprise teams misread data.