Over the last few years, I’ve watched companies double down on content production, brand campaigns, and paid performance – while their underlying search infrastructure was quietly deteriorating.

No alarms.

No dramatic crashes.

Just gradual erosion.

And leadership often doesn’t see it.

Because technical integrity rarely shows up in executive dashboards.

But in today’s environment – shaped by AI-driven results, entity understanding, and generative summaries – technical integrity is no longer a backend concern.

It’s a business risk.

Search Is No Longer a Channel. It’s Infrastructure.

Search used to be treated as a traffic source. A lever. A marketing channel.

That model is outdated.

Today, search functions as a visibility layer across the entire digital decision-making journey:

- Brand discovery

- Category exploration

- Competitive comparison

- AI-generated summaries

- Direct demand capture

- Market education

This layer influences perception before a user ever clicks.

If the layer is structurally compromised, influence weakens – even when campaigns keep running.

That’s why crawl diagnostics and indexation audits are not “technical housekeeping.”

They are structural risk management.

The Invisible Erosion Inside Enterprise Websites

In complex organizations, digital ecosystems evolve faster than governance.

- New templates launch without SEO validation

- Legacy pages accumulate

- Internal linking becomes inconsistent

- Faceted filters generate indexable URLs

- Migrations leave canonical conflicts behind

- Regional expansions fragment semantic signals

Over time, this creates:

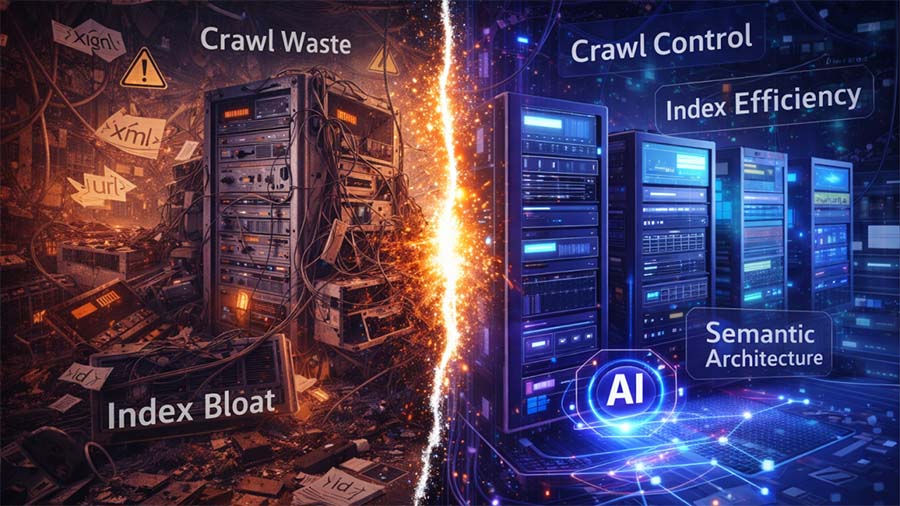

- Index bloat

- Crawl waste

- Diluted authority signals

- Canonical inconsistencies

- Competing keyword clusters

- Fragmented topical authority

From the outside, everything looks stable.

From the inside, search engines – and increasingly AI systems – struggle to interpret the architecture clearly.

When interpretation weakens, visibility declines.

Not dramatically. Silently.

This pattern of decline is not random – it reflects what I call structural decay in enterprise SEO.
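One of the symptoms above, index bloat from faceted filters, is easy to make visible from a crawl export. The sketch below is a minimal illustration, not a finished diagnostic: the `crawled` list, the `example.com` URLs, and the threshold of three variants are all assumptions chosen for the example. It groups URLs by path and counts how many distinct query-string variants each path has produced.

```python
from collections import defaultdict
from urllib.parse import urlsplit, parse_qsl


def facet_variants(urls):
    """Group URLs by scheme, host and path; collect distinct query variants."""
    variants = defaultdict(set)
    for url in urls:
        parts = urlsplit(url)
        base = f"{parts.scheme}://{parts.netloc}{parts.path}"
        # Sort parameters so ?a=1&b=2 and ?b=2&a=1 count as one variant.
        params = tuple(sorted(parse_qsl(parts.query, keep_blank_values=True)))
        if params:
            variants[base].add(params)
    return variants


def bloat_report(urls, threshold=3):
    """Return (base_url, variant_count) pairs at or above the threshold."""
    counts = {base: len(v) for base, v in facet_variants(urls).items()}
    flagged = [(base, n) for base, n in counts.items() if n >= threshold]
    # Largest offenders first; ties broken alphabetically.
    return sorted(flagged, key=lambda item: (-item[1], item[0]))


# Hypothetical crawl export, for illustration only.
crawled = [
    "https://example.com/shoes",
    "https://example.com/shoes?color=red",
    "https://example.com/shoes?color=red&size=42",
    "https://example.com/shoes?size=42&color=red",  # same variant, reordered
    "https://example.com/shoes?color=blue",
    "https://example.com/about",
]

report = bloat_report(crawled)
print(report)
```

A path that keeps climbing in this report is usually a filter or sort parameter that was never excluded from crawling or indexing.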

Why This Is a Management-Level Concern

Technical instability rarely triggers an immediate crisis.

Instead, it manifests as:

- Slower organic growth

- Increased volatility after core updates

- Reduced recovery capacity

- Difficulty scaling new initiatives

- Weak AI citation presence

When executives ask “Why are we not gaining momentum despite increased investment?”, the root cause is often structural.

You cannot scale authority on unstable foundations.

You cannot expect AI systems to confidently reference your brand if your architecture lacks clarity.

This is not about optimizing meta tags. This is about protecting digital equity.

AI Systems Are Less Forgiving Than Blue Links

In the era of classic search results, strong backlinks and aggressive content production could sometimes compensate for technical inefficiencies.

AI-driven systems operate differently.

Large language models and search-integrated AI rely heavily on:

- Clear entity relationships

- Strong topical clustering

- Consistent internal linking signals

- Structured data clarity

- Logical information hierarchy

- Clean index signals

If your infrastructure is fragmented, AI will synthesize the market narrative without you – or with diluted representation.

That is not just a traffic problem. It is a positioning problem.

This is exactly why an AI search readiness audit should precede aggressive content scaling.

Technical SEO in the AI Era: What “Integrity” Really Means

Technical integrity today means:

- Every indexed page has a defined purpose

- Crawl budget aligns with business priorities

- Internal linking reinforces strategic clusters

- Canonical logic is consistent and intentional

- Structured data reflects real entity relationships

- Orphan pages are eliminated

- Thin or duplicative content is controlled

- Architecture signals topical authority clearly
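Two of these conditions, orphan pages and consistent canonical logic, can be checked directly against crawl data. The following is a rough sketch under stated assumptions: `pages`, `links`, and `canonicals` are hypothetical structures you would build from your own crawler's export, and the two rules used here (no inbound internal link means orphan; a canonical target must be crawled and must point to itself) are simplifications of what a full audit would cover.

```python
def find_orphans(pages, links, entry_points=("/",)):
    """Return pages that no other page links to, excluding entry points."""
    linked = {target for source, target in links if source != target}
    return sorted(p for p in pages if p not in linked and p not in entry_points)


def canonical_conflicts(canonicals):
    """Flag pages whose canonical target is unknown or is itself redirected.

    `canonicals` maps each crawled URL to the canonical URL it declares.
    """
    conflicts = {}
    for page, target in canonicals.items():
        if target == page:
            continue  # self-referencing canonical, nothing to check
        if target not in canonicals:
            conflicts[page] = "canonical target was not crawled"
        elif canonicals[target] != target:
            conflicts[page] = "canonical target points elsewhere (chain)"
    return conflicts


# Hypothetical crawl data, for illustration only.
pages = {"/", "/pricing", "/blog/a", "/legacy/offer"}
links = [("/", "/pricing"), ("/", "/blog/a"), ("/blog/a", "/pricing")]

canonicals = {
    "/pricing": "/pricing",
    "/pricing?ref=nav": "/pricing",
    "/old-pricing": "/pricing?ref=nav",  # chain: target is not its own canonical
    "/blog/a": "/blog/a-amp",            # target never appeared in the crawl
}

print(find_orphans(pages, links))
print(canonical_conflicts(canonicals))
```

Neither check says what the right fix is. They only show where the architecture contradicts itself, which is the point at which a human decision is needed.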

When this foundation is strong:

- Content scales efficiently

- AI systems interpret you correctly

- Authority compounds

- Volatility decreases

- Growth becomes predictable

When it isn’t:

- Performance becomes unstable

- Investment efficiency drops

- AI visibility remains inconsistent

A proper indexation and crawl diagnostic often reveals where visibility erosion truly begins.
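At its simplest, such a diagnostic compares the pages you intend to have indexed with the pages that actually are. The sketch below assumes two hypothetical inputs: `intended`, taken from something like your XML sitemaps, and `indexed`, taken from whatever index coverage export you have access to. The function name and the output keys are illustrative, not a standard.

```python
def index_footprint(intended, indexed):
    """Compare the intended index footprint with the observed one."""
    intended, indexed = set(intended), set(indexed)
    overlap = intended & indexed
    return {
        # Pages you want indexed that are not.
        "missing": sorted(intended - indexed),
        # Pages that are indexed but were never part of the plan.
        "unintended": sorted(indexed - intended),
        # Share of intended pages that are actually indexed.
        "coverage": len(overlap) / len(intended) if intended else 0.0,
    }


# Hypothetical inputs, for illustration only.
intended = ["/", "/pricing", "/product/a", "/product/b"]
indexed = ["/", "/pricing", "/product/a", "/product/a?sort=price", "/tag/misc"]

footprint = index_footprint(intended, indexed)
print(footprint)
```

The `unintended` list is often the more revealing half: it shows what search engines have decided your site is about without anyone having approved it.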

The Governance Questions Leaders Should Be Asking

Instead of reacting to traffic drops, management teams should ask:

- Is our index footprint intentional and controlled?

- Are we managing crawl behavior strategically?

- Does internal linking reinforce our commercial priorities?

- Is our architecture aligned with entity-based search models?

- Are we structurally prepared for AI summarization systems?

- Do we have a visibility governance framework – or just tactical execution?

These are not developer-only questions.

They are executive governance questions.

The Shift: From Optimization to Visibility Engineering

We are no longer optimizing pages.

We are engineering visibility systems.

Search algorithms evolve. AI interfaces change. SERPs transform.

But the need for structural clarity remains constant.

Organizations that treat technical SEO as maintenance will remain reactive.

Organizations that treat it as structural risk management will build resilient visibility architectures that withstand:

- Core algorithm updates

- AI-driven result changes

- Market expansion

- Content scaling

- Competitive pressure

Final Thought

Search is no longer a traffic lever.

It is a visibility infrastructure layer influencing how markets perceive you.

Influence is not accidental.

It is engineered.

And engineering requires integrity at the core.

If your organization is scaling content, entering new markets, or preparing for AI-driven search environments, the real question is not: “How do we rank higher?”

It is: “Is our visibility system structurally sound?”

Because visibility, once fragmented, is expensive to recover.

But when architected intentionally, it compounds.
