AI Visibility Inspector: Why Your Best Pages Are Being Ignored by AI

Table of contents

- AI Visibility Inspector: Why Your Best Pages Are Being Ignored by AI

- What the AI Visibility Inspector Actually Does

- The Problem Most Enterprise Teams Are Not Seeing

- What Makes a Page Invisible to AI

- What You Get From a Diagnostic

- The Layer Most Visibility Strategies Miss

- What Changes When Interpretability Improves

- Who This Is For

- Run a Diagnostic on Your Content

- Frequently Asked Questions

Most enterprise websites I audit share the same paradox.

The content is strong. The writing is clear. The pages are technically sound. And still, when I run those same pages through AI search environments like Google’s AI Overviews, ChatGPT, or Perplexity, they simply don’t appear.

Not because they lack authority. Not because the topic is wrong. Because the content isn’t interpretable.

That is the problem the AI Visibility Inspector was built to solve. It is a Chrome plugin I developed – after years of diagnosing exactly this gap inside global enterprises – that identifies why specific pages fail to appear in AI-generated answers, even when every traditional SEO signal says they should.

What the AI Visibility Inspector Actually Does

The AI Visibility Inspector is a diagnostic tool that evaluates how well a page can be understood, interpreted, and used by AI systems at the moment of content extraction.

It does not rewrite your content. It does not generate anything automatically. Instead, it answers one question that most teams are not yet asking:

Can an AI system clearly understand what this page is, who it is for, and why it matters?

Because if the answer is unclear, no amount of ranking optimization will save the page. In AI-driven search, interpretability is the prerequisite – not the finishing touch.

The Inspector runs directly in your browser, so it can analyze any page in real time, whether it is your own content or a competitor’s. You get a structured diagnostic view of where the interpretation breaks down, and a prioritized set of recommendations to fix it.

The Problem Most Enterprise Teams Are Not Seeing

Traditional SEO operates on a well-tested model. You optimize the content, build the authority, fix the technical issues, and visibility follows. That model is no longer complete.

AI systems do not read pages the way human readers do. They do not “appreciate” quality in the traditional sense. What they do is scan for structured signals they can extract with confidence: defined entities, attributable expertise, clear topical scope, and extractable claims. When those signals are absent – or ambiguous – the content gets passed over, regardless of how well-written it is.

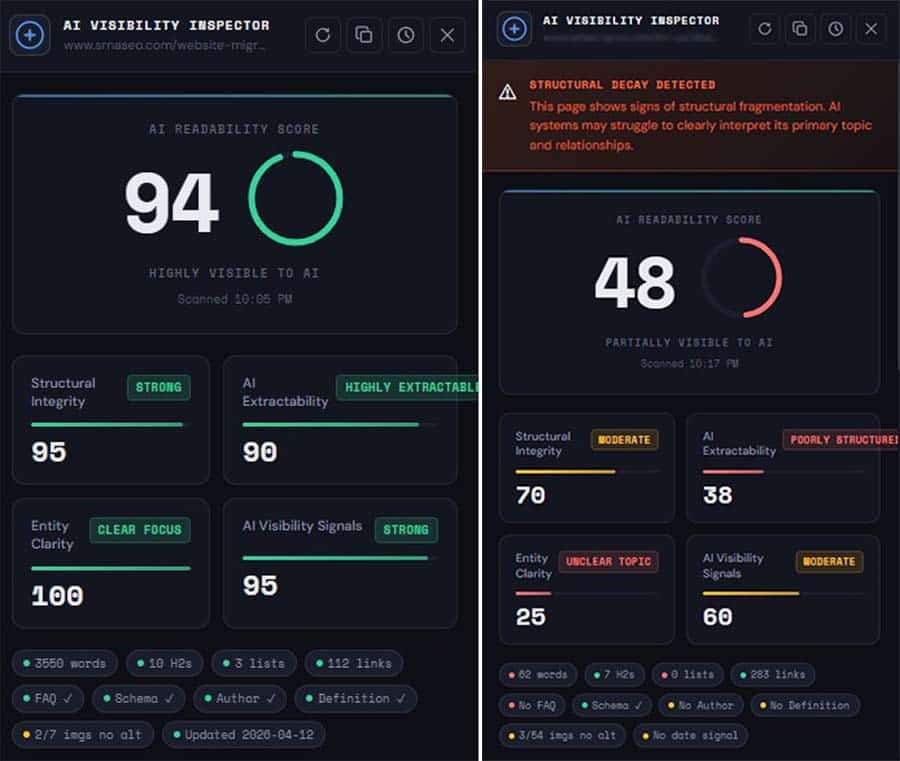

I have seen this pattern repeatedly across enterprise accounts. A page ranks on page one in Google. The content is authoritative. The brand is recognized. And yet, in AI-generated answers on the same query, the page is invisible. Competitors with thinner content, but stronger structural clarity, get cited instead.

This is the gap most SEO and content teams have not yet closed. And for good reason – until recently, there was no systematic way to diagnose it.

The cost of not addressing this is measurable. As AI-driven search continues to claim a growing share of zero-click decisions at the top of the funnel, brands that are not extractable from those systems effectively do not exist in that channel. For enterprise organizations with significant content investment already in place, that is not a future risk. It is a current one.

What Makes a Page Invisible to AI

To understand what the Inspector evaluates, it helps to understand what makes AI systems ignore a page in the first place.

Most AI retrieval failures fall into one of four structural categories.

Structural ambiguity. The page does not signal its own organization clearly enough for an AI system to build a reliable map of what is covered and where. Good writing does not fix this. Logical hierarchy, clear segmentation, and consistent formatting patterns do.

Low extractability. Key information is buried in narrative prose without the structural markers – definitions, direct statements, named claims – that allow AI systems to lift content confidently and accurately into a generated response.

Entity underspecification. The page references topics, concepts, and people without making their relationships explicit. AI systems work with entities and their connections. When those connections are assumed rather than stated, the page becomes ambiguous to interpret.

Missing attribution signals. AI systems apply a form of credibility filtering. If expertise is not explicitly connected to the content through author attribution, organizational context, or verifiable credentials, the page is less likely to be surfaced as a trusted source in generated answers.

These are not theoretical signals. They are observable, testable, and consistently measurable across pages. The Inspector surfaces each one with a clear rating and a concrete recommendation.
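
To make the first category concrete, here is a minimal sketch of one kind of check that can flag a broken heading hierarchy. It is an illustration only, not the Inspector's actual logic: the `Heading` shape, the `findHierarchyIssues` function, and the two rules it applies (exactly one top-level heading, no skipped levels) are assumptions made for this example.

```typescript
// Hypothetical helper for illustration; not part of the AI Visibility Inspector.
interface Heading {
  level: number; // 1 for h1, 2 for h2, and so on
  text: string;
}

interface HierarchyIssue {
  index: number; // position of the offending heading, or -1 for page-level issues
  message: string;
}

// Flags two simple structural problems (assumed rules, chosen for this sketch):
// a page without exactly one h1, and a heading that skips a level going deeper.
function findHierarchyIssues(headings: Heading[]): HierarchyIssue[] {
  const issues: HierarchyIssue[] = [];

  const h1Count = headings.filter((h) => h.level === 1).length;
  if (h1Count !== 1) {
    issues.push({ index: -1, message: `expected exactly one h1, found ${h1Count}` });
  }

  for (let i = 1; i < headings.length; i++) {
    const prev = headings[i - 1];
    const curr = headings[i];
    // Going one level deeper is fine; jumping from h2 to h4 hides the outline.
    if (curr.level > prev.level + 1) {
      issues.push({
        index: i,
        message: `"${curr.text}" jumps from h${prev.level} to h${curr.level}`,
      });
    }
  }
  return issues;
}

// Sample outline with one skipped level (h2 followed directly by h4).
const sampleHeadings: Heading[] = [
  { level: 1, text: "AI Visibility Inspector" },
  { level: 2, text: "What Makes a Page Invisible to AI" },
  { level: 4, text: "Structural ambiguity" },
  { level: 2, text: "Who This Is For" },
];

const sampleIssues = findHierarchyIssues(sampleHeadings);
console.log(sampleIssues);
```

In a browser, the input list could be built from `document.querySelectorAll("h1, h2, h3, h4, h5, h6")`. The sketch takes plain data instead so it can run anywhere.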

What You Get From a Diagnostic

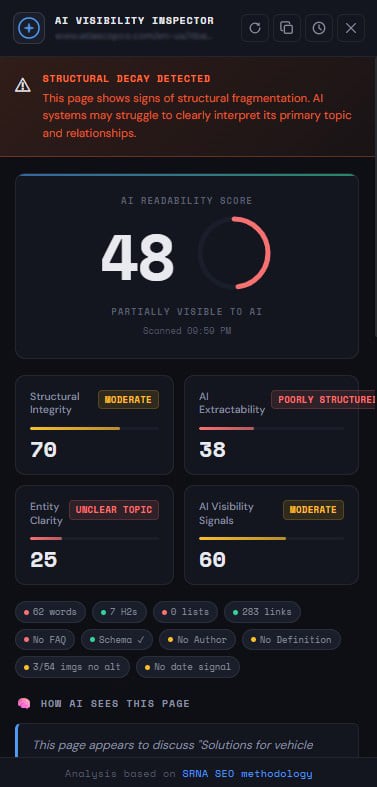

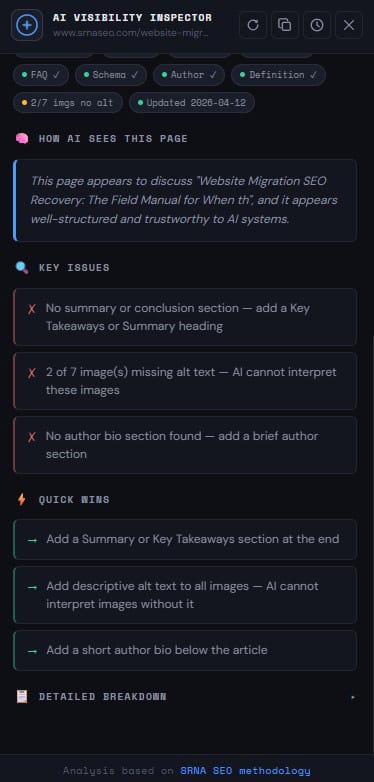

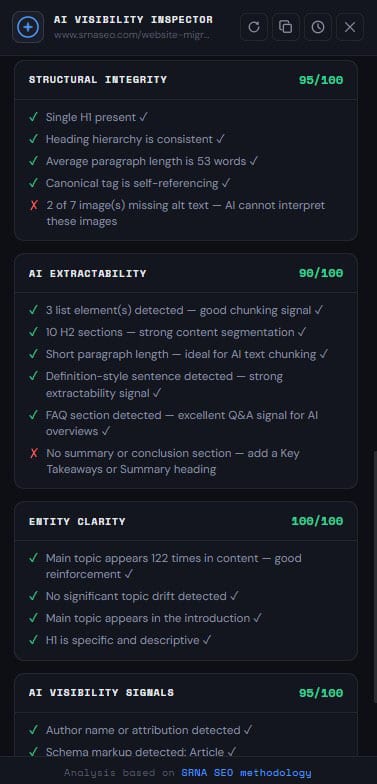

Each diagnostic the Inspector produces gives you a structured view of how a specific page performs from an AI interpretation perspective.

This includes a scored evaluation across the four core dimensions: structural clarity, extractability, entity definition, and attribution. It also includes a prioritized gap list – the specific signals that are either absent or insufficient – and a recommended sequence for fixing them.

The goal is not to generate a score for its own sake. The goal is to show you precisely why a page that appears fully optimized by traditional standards is still not being used in AI-generated answers.
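
As a rough picture of what a scored evaluation with a prioritized gap list could look like as data, here is a short sketch. Everything in it is hypothetical: the type names, the 0 to 100 score scale, the 1 to 3 severity scale, and the ordering rule are assumptions for illustration and do not describe the Inspector's real output format.

```typescript
// Hypothetical data model for illustration; not the Inspector's real report format.
type Dimension =
  | "structuralClarity"
  | "extractability"
  | "entityDefinition"
  | "attribution";

interface Gap {
  dimension: Dimension;
  description: string;
  severity: 1 | 2 | 3; // assumed scale: 3 is the most severe
}

interface DiagnosticReport {
  url: string;
  scores: Record<Dimension, number>; // assumed scale: 0 (worst) to 100 (best)
  gaps: Gap[];
}

// Orders gaps by severity (highest first). Ties go to the gap whose
// dimension has the lower score, on the assumption that the weakest
// dimension is the most urgent. Returns a new array; the input is untouched.
function prioritizeGaps(report: DiagnosticReport): Gap[] {
  return [...report.gaps].sort((a, b) => {
    if (b.severity !== a.severity) {
      return b.severity - a.severity;
    }
    return report.scores[a.dimension] - report.scores[b.dimension];
  });
}

// Made-up example values, not measurements from a real page.
const exampleReport: DiagnosticReport = {
  url: "https://example.com/pillar-page",
  scores: {
    structuralClarity: 80,
    extractability: 35,
    entityDefinition: 40,
    attribution: 55,
  },
  gaps: [
    { dimension: "attribution", description: "No author byline", severity: 2 },
    { dimension: "entityDefinition", description: "Product name never defined", severity: 3 },
    { dimension: "extractability", description: "Key claim buried in a long paragraph", severity: 3 },
    { dimension: "structuralClarity", description: "Inconsistent list formatting", severity: 1 },
  ],
};

const fixOrder = prioritizeGaps(exampleReport).map((gap) => gap.dimension);
console.log(fixOrder);
```

With these made-up values, the extractability and entity definition gaps sort to the top, which matches the fix order the article describes as producing the fastest gains.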

Organizations that implement these recommendations consistently see meaningful improvements in AI citation rates within two to three content update cycles. Based on patterns I have observed across multiple enterprise environments, resolving extractability and entity definition gaps alone tends to produce the fastest gains – often within weeks of implementation, not quarters.

The cost of skipping this layer, on the other hand, compounds over time. Every piece of content that goes to production without interpretability review requires remediation later. At enterprise publishing velocity – two, five, ten articles per week – that backlog grows quickly.

In many cases, low AI visibility is not caused by a single issue, but by a combination of weak SEO signals that reduce clarity and confidence in the content.

The Layer Most Visibility Strategies Miss

There is an important distinction in how most teams approach AI search visibility, and it is worth making explicit.

Most conversations in this space focus on citations, mentions, and external signals. Which sites are ChatGPT citing? Which pages are appearing in AI Overviews? How do we get our brand mentioned?

Those questions matter. But they operate at the level of selection – which content gets chosen once AI systems are already evaluating options.

The Inspector operates one step earlier, at the level of eligibility.

Before a page can be selected, it must be interpretable. And in most enterprise content environments I have assessed, that is exactly where the breakdown happens. The page is technically accessible. The content is genuinely valuable. But the interpretability layer – the structural and entity signals that allow AI systems to extract and attribute content with confidence – is missing or inconsistent.

Fixing eligibility before chasing selection is the more efficient path. It is also the one most organizations have not yet started.

What Changes When Interpretability Improves

In testing the Inspector across my own content and client pages, the pattern is consistent.

When entity definition is made explicit, when structural segmentation is clear, and when attribution signals are properly connected to the content, AI systems begin to recognize and reference that content reliably. Not because the content suddenly became more authoritative. Because it became interpretable.

That shift tends to happen faster than most teams expect. AI systems are not waiting for quarterly algorithm updates. They are evaluating content every time a query is processed. When you fix the interpretability layer, the impact on AI citation rates is measurable within the same content cycle.

The implication for enterprise teams is significant. Content you have already invested in – articles, pillar pages, resource hubs – can be made eligible for AI retrieval without a full rewrite. The structural and entity signals can be added, clarified, or strengthened without changing the core narrative. That is a high-value, low-disruption intervention for organizations with substantial content archives.

For further context on how AI systems evaluate content structure, I covered the foundational signals in detail in SEO Foundation for AI Retrieval and expanded on entity-level optimization in Entity-Based SEO. Both are directly relevant to what the Inspector measures.

Who This Is For

The AI Visibility Inspector is built for professionals who are already operating at a high level and want to close a specific, measurable gap.

That includes enterprise SEO teams navigating the shift to AI-driven search, where the tools and frameworks from the traditional SEO stack are necessary but no longer sufficient. It includes content teams producing high-quality material that consistently underperforms in AI-generated results despite meeting every conventional quality bar. And it includes SEO managers, Heads of Digital, VPs, and C-suite leaders who want to understand – with evidence – why visibility is not matching content investment.

It is especially valuable in environments where the quality of content is genuinely high, but the outcomes in AI search do not reflect that quality. That gap is almost always an interpretability problem, not a quality problem.

If your team is already tracking AI citations through tools like Profound, Otterly, or Semrush’s AI features, the Inspector works as a complementary diagnostic layer. Those tools tell you whether you are appearing. The Inspector tells you why you are not – and what to fix.

You can learn more about how I approach AI search readiness architecture in AI Search Readiness and the structural design principles behind sustainable visibility in Visibility Strategy and System Design.

Run a Diagnostic on Your Content

If you want to understand how your pages perform in AI-driven search environments – and why specific content is not appearing despite strong traditional SEO – the Inspector gives you that answer at the page level, in real time.

For teams evaluating multiple pages or integrating interpretability review into a broader content workflow, I also offer structured advisory engagements. These are designed for enterprise SEO and content organizations that want to build this capability internally, not just audit once and move on.

Frequently Asked Questions

What is the AI Visibility Inspector?

The AI Visibility Inspector is a Chrome plugin that diagnoses how well a specific page can be understood, interpreted, and used by AI systems. It evaluates structural clarity, extractability, entity definition, and attribution signals, then produces a prioritized diagnostic report identifying exactly where AI interpretation breaks down.

How is the Inspector different from a typical AI SEO audit?

Most AI SEO audits focus on external signals – whether your brand is cited, how often it is mentioned, and what sources AI systems are using. The Inspector operates at the content and structure level, evaluating the page’s internal interpretability before those selection decisions are made. It is a diagnostic of eligibility, not a tracker of outcomes.

Does the Inspector replace traditional SEO tools?

No. Traditional SEO tools remain essential for tracking rankings, technical health, and backlink profiles. The Inspector fills a gap that those tools do not cover: how AI systems parse, extract, and interpret content at the page level. It is a complementary diagnostic layer, not a replacement.

Which pages benefit most from a diagnostic?

Pages that already carry strong content but underperform in AI-generated answers are the highest-value candidates. That includes pillar pages, thought leadership articles, product and service pages, and author or expert hub pages. Any page that should be a natural source for AI-generated responses on a given topic, but is not, is a strong candidate for an interpretability diagnostic.

How quickly do improvements show up?

In most cases, improvements in AI citation rates are observable within two to three content update cycles. AI systems evaluate content continuously, so structural and entity improvements take effect faster than traditional SEO changes, which depend on crawl schedules and index updates. Entity definition and extractability fixes tend to produce the fastest gains.

What is the cost of not addressing interpretability?

The primary cost is compounding invisibility in AI-driven search channels. As AI-generated answers claim a larger share of zero-click decisions at the top of the funnel, content that is not eligible for AI retrieval effectively does not exist in that channel. AI-generated answers are also becoming more common, with 50% of queries in the United States now triggering Google AI Overviews. In addition, First Page Sage reports a 61% drop in organic click-through rate (CTR) attributed to AI-generated answers, which indicates a significant shift in user behavior toward AI-assisted responses. For enterprise organizations with significant content archives, the remediation backlog grows with every new piece published without interpretability review. The longer the delay, the larger the gap to close.

Does the diagnostic apply across different AI platforms?

Yes. The structural and entity signals the Inspector evaluates affect how content is interpreted across all AI systems that use content retrieval – including ChatGPT, Perplexity, Claude, and any other LLM-based answer engine. The diagnostic is not platform-specific.

What does entity definition mean?

Entity definition refers to how clearly a page specifies the people, organizations, concepts, and topics it covers, and how explicitly it connects them. AI systems build understanding from entities and their relationships. When those relationships are implied rather than stated, the content becomes difficult to interpret reliably. The Inspector evaluates whether entities are named, defined, and connected in ways that AI systems can extract with confidence.

Can I use the Inspector on competitor pages?

Yes. Because the Inspector runs as a Chrome plugin, it can analyze any publicly accessible page. Evaluating competitor pages can surface structural and entity practices that may explain why they appear in AI-generated answers more consistently than your own content.

Where does the Inspector fit in a broader AI search strategy?

The Inspector addresses the interpretability layer – the foundational prerequisite for AI search visibility. It fits before citation tracking, external signal building, and content promotion. The logical sequence is: make content interpretable first, then optimize for selection, then track and refine. Most teams are working on the second and third stages while the first remains unaddressed.

Ivica Srncevic

Enterprise SEO strategist specializing in search architecture and AI-driven visibility. With 25+ years of experience across global organizations including Adecco Group and Atlas Copco, he works on designing, diagnosing, and optimizing how complex digital ecosystems are structured, understood, and surfaced by search engines and AI systems.